Follow the Object: Curriculum Learning for Manipulation Tasks with Imagined Goals

Paper and Code

Aug 05, 2020

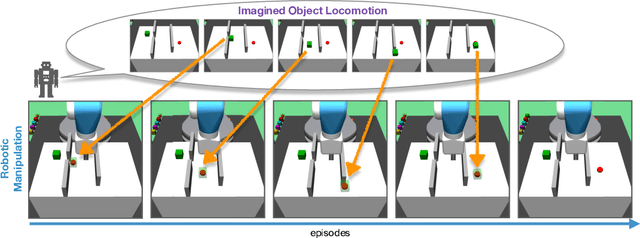

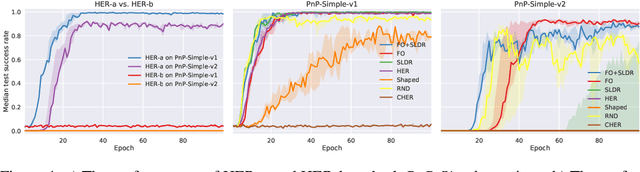

Learning robot manipulation through deep reinforcement learning in environments with sparse rewards is a challenging task. In this paper we address this problem by introducing a notion of imaginary object goals. For a given manipulation task, the object of interest is first trained to reach a desired target position on its own, without being manipulated, through physically realistic simulations. The object policy is then leveraged to build a predictive model of plausible object trajectories providing the robot with a curriculum of incrementally more difficult object goals to reach during training. The proposed algorithm, Follow the Object (FO), has been evaluated on 7 MuJoCo environments requiring increasing degree of exploration, and has achieved higher success rates compared to alternative algorithms. In particularly challenging learning scenarios, e.g. where the object's initial and target positions are far apart, our approach can still learn a policy whereas competing methods currently fail.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge