Flow-based Autoregressive Structured Prediction of Human Motion

Paper and Code

Apr 09, 2021

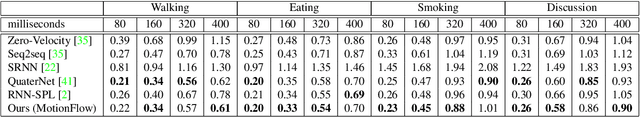

A new method is proposed for human motion predition by learning temporal and spatial dependencies in an end-to-end deep neural network. The joint connectivity is explicitly modeled using a novel autoregressive structured prediction representation based on flow-based generative models. We learn a latent space of complex body poses in consecutive frames which is conditioned on the high-dimensional structure input sequence. To construct each latent variable, the general and local smoothness of the joint positions are considered in a generative process using conditional normalizing flows. As a result, all frame-level and joint-level continuities in the sequence are preserved in the model. This enables us to parameterize the inter-frame and intra-frame relationships and joint connectivity for robust long-term predictions as well as short-term prediction. Our experiments on two challenging benchmark datasets of Human3.6M and AMASS demonstrate that our proposed method is able to effectively model the sequence information for motion prediction and outperform other techniques in 42 of the 48 total experiment scenarios to set a new state-of-the-art.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge