Federated Momentum Contrastive Clustering

Jun 10, 2022

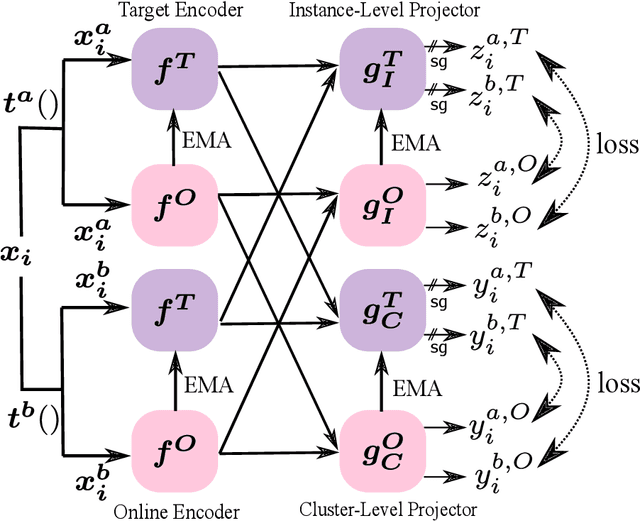

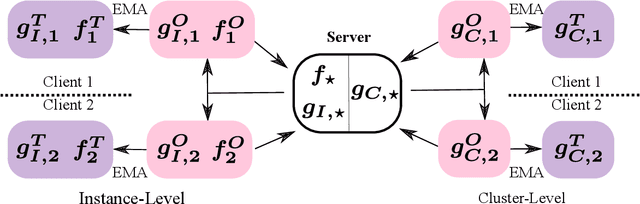

We present federated momentum contrastive clustering (FedMCC), a learning framework that can not only extract discriminative representations from distributed local data but also perform data clustering. In FedMCC, a transformed data pair passes through both the online and target networks, yielding four representations over which the losses are computed. The high-quality representations produced by FedMCC can outperform several existing self-supervised learning methods on linear evaluation and semi-supervised learning tasks. FedMCC can easily be adapted to ordinary centralized clustering through what we call momentum contrastive clustering (MCC). We show that MCC achieves state-of-the-art clustering accuracy on certain datasets, such as STL-10 and ImageNet-10. We also present a method to reduce the memory footprint of our clustering schemes.
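The "two views, two networks, four representations" pattern can be illustrated with a small pure-Python sketch. Everything beyond that pattern is an assumption made for illustration, not FedMCC's actual design: the toy single-layer linear networks, the exponential-moving-average ("momentum") target update, the symmetric InfoNCE loss, and the hypothetical names `four_view_loss`, `momentum_update`, `tau`, and `m`. The clustering head and the federated aggregation step are not shown.

```python
import math
import random

# Toy "network": one linear layer stored as a list of weight rows.
# (Assumption for illustration; real encoders would be deep networks.)
def linear(weights, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def normalize(v):
    norm = math.sqrt(sum(a * a for a in v)) or 1.0
    return [a / norm for a in v]

def momentum_update(target, online, m=0.99):
    """Assumed EMA rule: target <- m * target + (1 - m) * online."""
    return [[m * t + (1 - m) * o for t, o in zip(t_row, o_row)]
            for t_row, o_row in zip(target, online)]

def info_nce(queries, keys, tau=0.5):
    """Mean InfoNCE loss: queries[i] and keys[i] form the positive pair,
    and every other key in the batch acts as a negative."""
    total = 0.0
    for i, q in enumerate(queries):
        logits = [sum(a * b for a, b in zip(q, k)) / tau for k in keys]
        mx = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_denom = mx + math.log(sum(math.exp(z - mx) for z in logits))
        total += log_denom - logits[i]
    return total / len(queries)

def four_view_loss(online, target, view1, view2, tau=0.5):
    # The four representations: online and target networks applied to each view.
    z1_on = [normalize(linear(online, x)) for x in view1]
    z2_on = [normalize(linear(online, x)) for x in view2]
    z1_tg = [normalize(linear(target, x)) for x in view1]
    z2_tg = [normalize(linear(target, x)) for x in view2]
    # Symmetric loss: each online view is matched with the other view's target output.
    return 0.5 * (info_nce(z1_on, z2_tg, tau) + info_nce(z2_on, z1_tg, tau))

# Usage on random toy data: two noisy "augmentations" of each sample.
random.seed(0)
dim, out_dim, batch = 4, 3, 5
online = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(out_dim)]
target = [row[:] for row in online]  # target starts as a copy of the online net
samples = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(batch)]
view1 = [[v + random.gauss(0, 0.1) for v in s] for s in samples]
view2 = [[v + random.gauss(0, 0.1) for v in s] for s in samples]

loss = four_view_loss(online, target, view1, view2)
# In real training the online net would take a gradient step here; the target
# net is never trained directly, only moved toward the online net.
target = momentum_update(target, online)
```

The momentum target gives slowly changing keys to contrast against, which is the usual motivation for this design in momentum-based contrastive methods. Whether FedMCC uses exactly this update rule and loss is not stated in the abstract.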
