Feature Selection for multi-labeled variables via Dependency Maximization


Feb 21, 2019

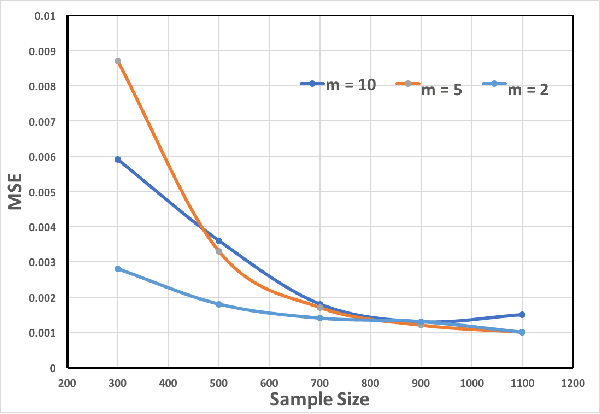

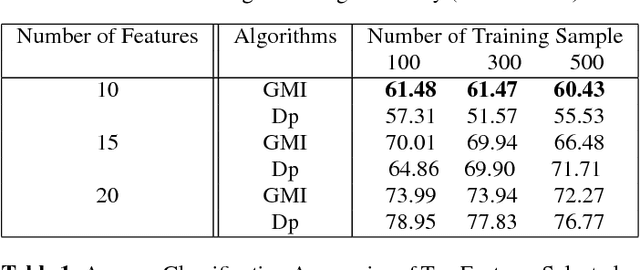

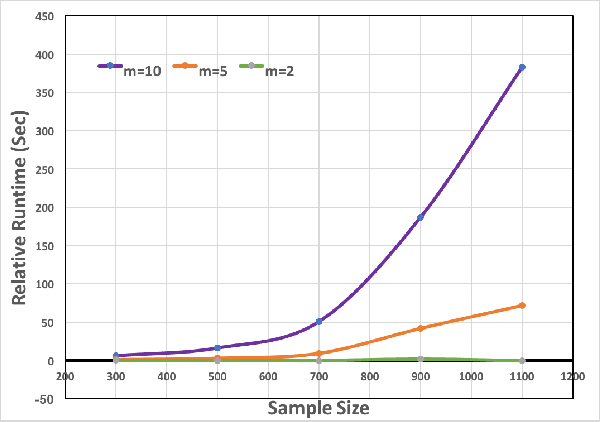

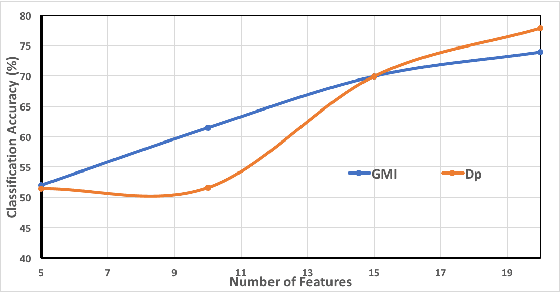

Feature selection and dimensionality reduction are essential steps in data analysis. In this work, we propose a new feature selection criterion formulated as the conditional information between features given the labeled variable. Instead of the standard mutual information measure based on Kullback-Leibler divergence, we use the proposed criterion to filter out redundant features for multiclass classification. The approach yields a fast, non-parametric implementation of feature selection, because the criterion can be estimated directly with a geometric measure of dependency: the global Friedman-Rafsky (FR) multivariate run test statistic, constructed from a global minimal spanning tree (MST). We demonstrate the advantages of the proposed approach through simulation and also apply it to the MNIST data set.
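To make the geometric idea concrete, the following is a minimal sketch of the basic FR count: build a Euclidean MST over the pooled sample and count the edges that join points with different labels. It is not the authors' conditional criterion or their code; the function names `mst_edges` and `fr_statistic`, the use of Prim's algorithm, and the toy data are all assumptions made for illustration.

```python
import math


def mst_edges(points):
    """Return the n-1 edges (i, j) of a Euclidean minimum spanning tree.

    Uses Prim's algorithm on the complete graph, O(n^2) time.
    (Illustrative helper, not taken from the paper.)
    """
    n = len(points)
    if n < 2:
        return []
    in_tree = [False] * n
    best_dist = [math.inf] * n   # cheapest known distance to the tree
    best_from = [-1] * n         # tree vertex achieving that distance
    in_tree[0] = True
    for j in range(1, n):
        best_dist[j] = math.dist(points[0], points[j])
        best_from[j] = 0
    edges = []
    for _ in range(n - 1):
        # Pick the vertex outside the tree that is closest to the tree.
        k = min((j for j in range(n) if not in_tree[j]),
                key=best_dist.__getitem__)
        edges.append((best_from[k], k))
        in_tree[k] = True
        # Update the cheapest connections through the new tree vertex.
        for j in range(n):
            if not in_tree[j]:
                d = math.dist(points[k], points[j])
                if d < best_dist[j]:
                    best_dist[j] = d
                    best_from[j] = k
    return edges


def fr_statistic(points, labels):
    """Count MST edges whose endpoints carry different labels."""
    return sum(1 for i, j in mst_edges(points) if labels[i] != labels[j])


# Toy data (hypothetical): two well-separated clusters in the plane.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
separated = [0, 0, 0, 1, 1, 1]   # labels follow the clusters
mixed = [0, 1, 0, 1, 0, 1]       # labels ignore the clusters

print(fr_statistic(points, separated))
print(fr_statistic(points, mixed))
```

When the labels follow the cluster structure, only a single MST edge bridges the two groups, so the count is small; labels that ignore the geometry produce more cross-label edges. A feature subset for which the count stays small is, in this simplified view, one in which the classes remain well separated.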
