Feature Fusion Detector for Semantic Cognition of Remote Sensing

Paper and Code

Sep 28, 2019

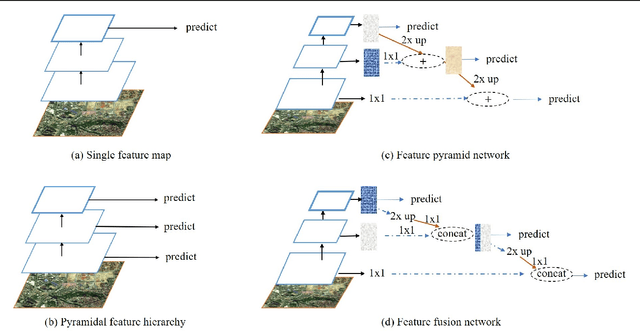

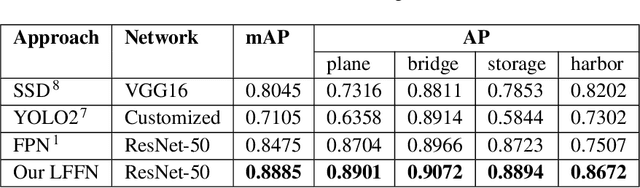

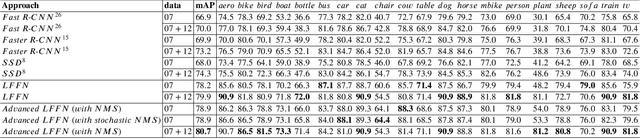

Remote sensing images are valuable in many fields, but that value must be extracted through cognitive approaches. Object detection in remote sensing imagery is an appropriate way to achieve this semantic cognition. However, such detection is challenging because of scale diversity, varied viewpoints, small objects, and complex lighting and shadow backgrounds. In this article, inspired by the state-of-the-art detection framework FPN, we propose a novel approach for constructing a feature fusion module that optimizes the use of feature context in detection, and we call our system LFFN, for Layer-weakening Feature Fusion Network. We explore the inherent relevance of different layers to the final decision and the incentives that higher-level features provide to lower-level features. More importantly, we explore how different backbone networks mine basic features and exploit correlations among convolutional channels, and we call the upgraded version advanced LFFN. In experiments on a remote sensing dataset from Google Earth, LFFN proves effective and practical for the semantic cognition of remote sensing, achieving 89% mAP, which is 4.1% higher than that of FPN. Moreover, in terms of generalization performance, LFFN achieves 79.9% mAP on VOC 2007 and 73.0% mAP on the VOC 2012 test set, and advanced LFFN obtains mAP values of 80.7% and 74.4% on VOC 2007 and 2012 respectively, outperforming the comparable state-of-the-art SSD and Faster R-CNN models.
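For readers unfamiliar with the FPN-style fusion that the abstract names as its starting point, the following is a minimal sketch of a generic top-down pathway, in which each coarser feature map is upsampled and added to the next finer one. It is not the LFFN module, whose layer-weakening design the abstract does not specify. The function names `upsample2x` and `top_down_fuse` are hypothetical, and the 1x1 lateral and 3x3 smoothing convolutions used in a full FPN are omitted for brevity.

```python
import numpy as np


def upsample2x(x):
    """Nearest-neighbour 2x upsampling of a (C, H, W) feature map."""
    return x.repeat(2, axis=1).repeat(2, axis=2)


def top_down_fuse(features):
    """Generic FPN-style top-down fusion (illustrative, not LFFN).

    `features` is a list of (C, H, W) arrays ordered from fine to coarse,
    where each level has half the spatial resolution of the previous one
    and all levels share the same channel count (an assumption made here
    so that no lateral convolution is needed).
    Returns the fused maps in the same fine-to-coarse order.
    """
    fused = [features[-1]]  # the coarsest level passes through unchanged
    for lateral in reversed(features[:-1]):
        # Inject higher-level context into the next finer level.
        fused.append(lateral + upsample2x(fused[-1]))
    return fused[::-1]


# Toy pyramid with three levels of constant activations.
c3 = np.ones((4, 8, 8))
c4 = np.ones((4, 4, 4))
c5 = np.ones((4, 2, 2))
p3, p4, p5 = top_down_fuse([c3, c4, c5])
```

In this toy pyramid, each finer level accumulates the contribution of every coarser level above it, which is the sense in which higher-level features inform lower-level ones in an FPN-like design.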
