Feature Chirality in Deep Learning Models

May 06, 2023

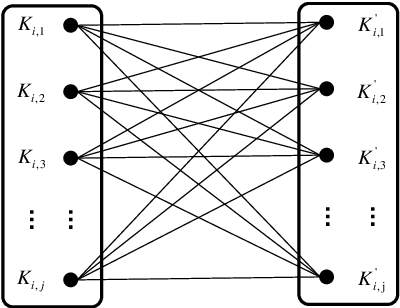

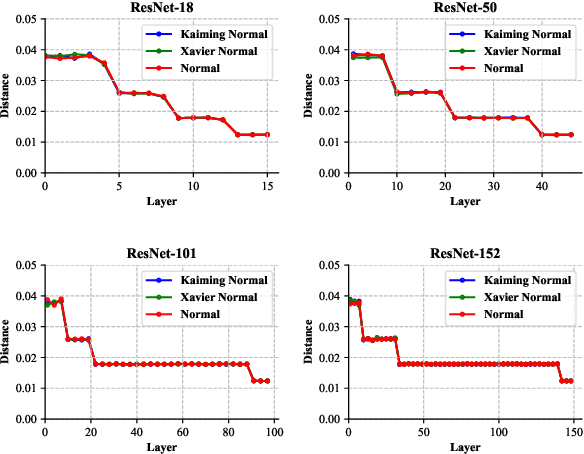

As deep learning applications grow rapidly, their interpretability has become increasingly important. Chirality is a universal property that exists widely in nature, and applying it to research on explaining deep learning may be helpful. Inspired by a recent study in which a convolutional neural network (CNN) exploited visual chirality to distinguish whether an image had been flipped, this paper studies feature chirality, which shows how training changes the statistics of deep learning models' feature data. We rethink the chirality property at the feature level, propose feature chirality, and give a measure for it. Our analysis of feature chirality on AlexNet, VGG, and ResNet reveals similar but surprising results, including that feature chirality is prevalent in these models and that the models' initialization methods do not affect it. Our work shows that feature chirality has implications for model evaluation, model interpretability, and model parameter optimization.
