Fast Optimal Estimation with Intractable Models using Permutation-Invariant Neural Networks

Paper and Code

Aug 27, 2022

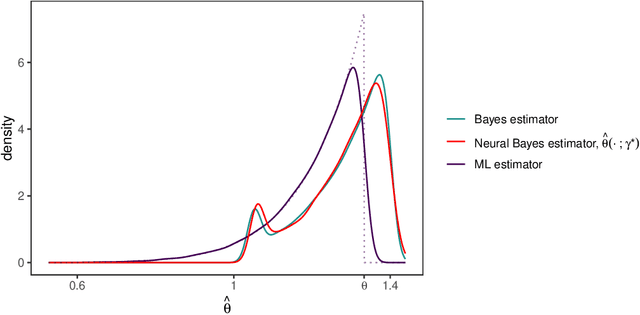

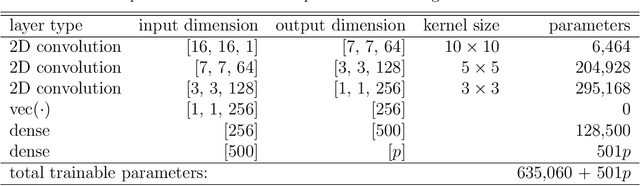

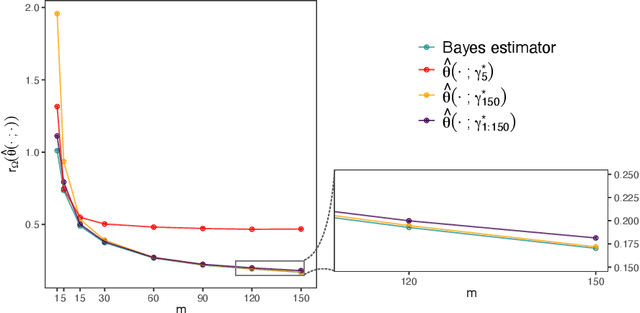

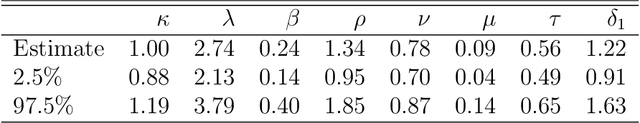

Neural networks have recently shown promise for likelihood-free inference, providing orders-of-magnitude speed-ups over classical methods. However, current implementations are suboptimal when estimating parameters from independent replicates. In this paper, we use a decision-theoretic framework to argue that permutation-invariant neural networks are ideally placed for constructing Bayes estimators for arbitrary models, provided that simulation from these models is straightforward. We illustrate the potential of these estimators on both conventional spatial models and highly parameterised spatial-extremes models, and show that they considerably outperform neural estimators that do not account for replication appropriately in their network design. At the same time, they are highly competitive with, and much faster than, traditional likelihood-based estimators. We apply our estimator to a spatial analysis of sea-surface temperature in the Red Sea where, after training, we obtain parameter estimates, and uncertainty quantification of the estimates via bootstrap sampling, from hundreds of spatial fields in a fraction of a second.
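To make the idea of a permutation-invariant estimator concrete, the following is a minimal NumPy sketch, not the authors' implementation. It assumes a DeepSets-style form in which an inner network `psi` summarises each replicate, the summaries are averaged, and an outer network `phi` maps the pooled summary to a parameter estimate. The abstract does not specify the architecture, so this form, the layer sizes, and all function names are illustrative assumptions. The weights are random and untrained; in practice they would presumably be fitted on simulated parameter-data pairs. The last lines sketch a non-parametric bootstrap over replicates, which is one possible reading of the "bootstrap sampling" mentioned above.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_mlp(sizes, rng):
    """Random weights for a small dense network (untrained, for illustration only)."""
    return [(rng.normal(scale=0.5, size=(m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def mlp(params, x):
    """Apply the network: ReLU on hidden layers, linear output layer."""
    for W, b in params[:-1]:
        x = np.maximum(x @ W + b, 0.0)
    W, b = params[-1]
    return x @ W + b

def deepset_estimator(psi, phi, Z):
    """Hypothetical estimator: Z has shape (m, d), i.e. m replicates of dimension d."""
    summaries = mlp(psi, Z)           # one summary vector per replicate, shape (m, k)
    pooled = summaries.mean(axis=0)   # averaging makes the result order-independent
    return mlp(phi, pooled)           # parameter estimate, shape (p,)

# Assumed sizes: data dimension d, summary dimension k, number of parameters p.
d, k, p = 16, 8, 2
psi = init_mlp([d, 32, k], rng)
phi = init_mlp([k, 32, p], rng)

Z = rng.normal(size=(30, d))          # 30 stand-in replicates (not real data)
theta_hat = deepset_estimator(psi, phi, Z)

# Shuffling the replicates leaves the estimate unchanged (up to rounding).
theta_hat_shuffled = deepset_estimator(psi, phi, Z[rng.permutation(len(Z))])

# Non-parametric bootstrap (an assumption): resample replicates with replacement
# and re-evaluate the estimator, which is cheap once the network is trained.
boot = np.stack([
    deepset_estimator(psi, phi, Z[rng.integers(0, len(Z), size=len(Z))])
    for _ in range(200)
])
```

Because the pooling step is a mean, the same networks accept any number of replicates, and each bootstrap re-estimate costs only one forward pass.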
