Fast Online EM for Big Topic Modeling

Paper and Code

Dec 07, 2015

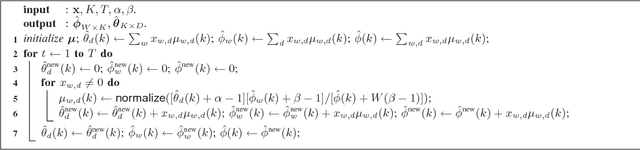

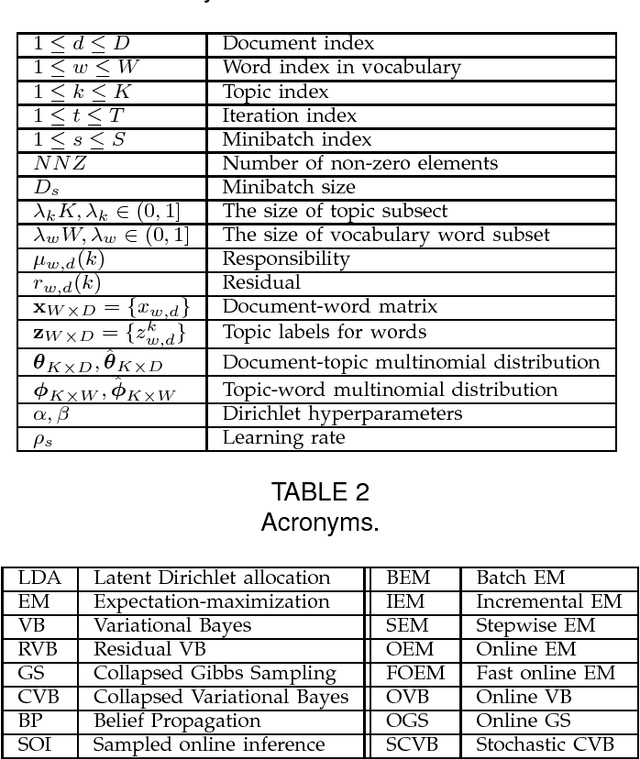

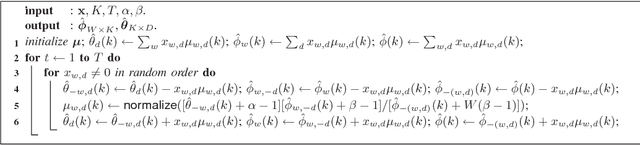

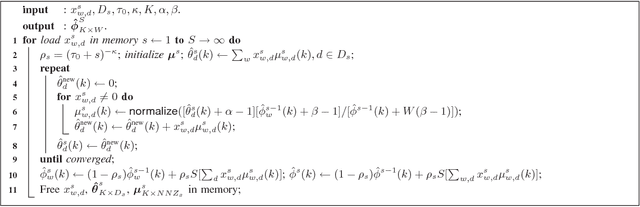

The expectation-maximization (EM) algorithm can compute the maximum-likelihood (ML) or maximum a posterior (MAP) point estimate of the mixture models or latent variable models such as latent Dirichlet allocation (LDA), which has been one of the most popular probabilistic topic modeling methods in the past decade. However, batch EM has high time and space complexities to learn big LDA models from big data streams. In this paper, we present a fast online EM (FOEM) algorithm that infers the topic distribution from the previously unseen documents incrementally with constant memory requirements. Within the stochastic approximation framework, we show that FOEM can converge to the local stationary point of the LDA's likelihood function. By dynamic scheduling for the fast speed and parameter streaming for the low memory usage, FOEM is more efficient for some lifelong topic modeling tasks than the state-of-the-art online LDA algorithms to handle both big data and big models (aka, big topic modeling) on just a PC.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge