Eyes on the Prize: Improved Perception for Robust Dynamic Grasping


Apr 29, 2022

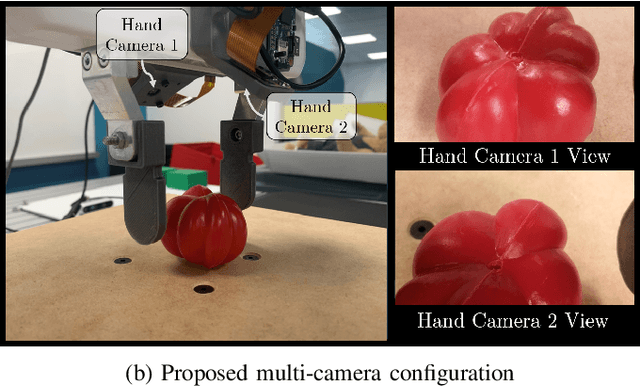

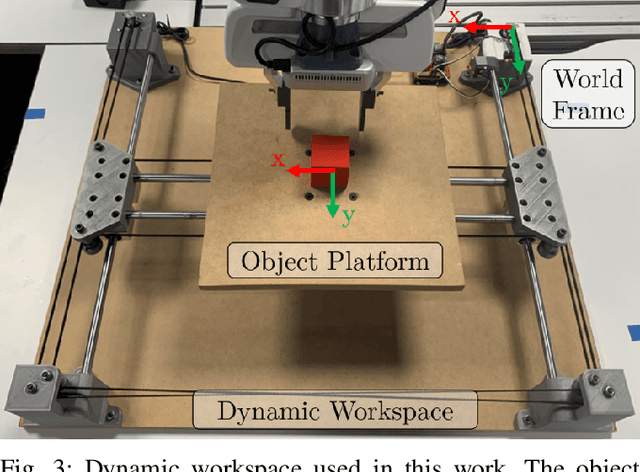

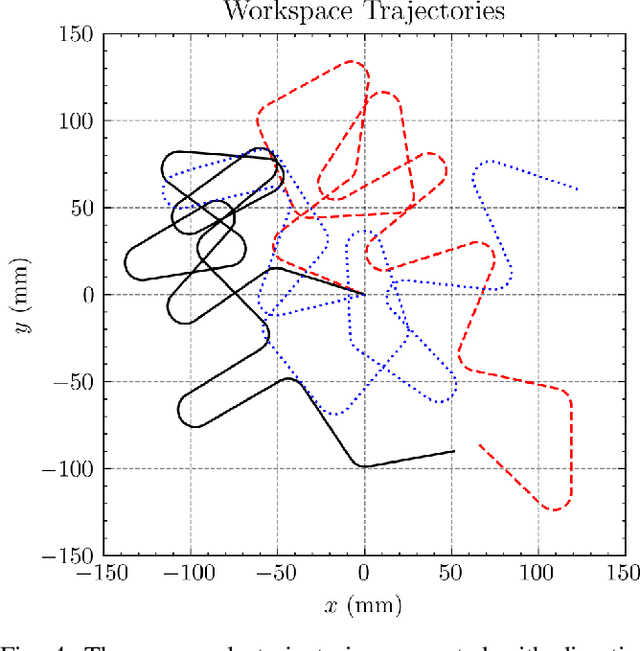

This paper addresses perception challenges for robust grasping in the presence of clutter and unpredictable relative motion between robot and object. Traditional perception systems developed for static grasping cannot provide feedback during the final phase of a grasp because of sensor minimum range, occlusion, and a limited field of view. We present a multi-camera eye-in-hand perception system that has advantages over commonly used camera configurations. We quantitatively evaluate its performance on a real robot with an image-based visual servoing grasp controller and show a significantly improved success rate on a dynamic grasping task. We also describe a fully reproducible open-source testing system to encourage benchmarking of dynamic grasping system performance.
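For readers unfamiliar with image-based visual servoing (IBVS), the sketch below shows the textbook point-feature control law, v = -gain * pinv(L) * e, where e is the difference between current and desired image features and L is the stacked interaction matrix. It is not the controller used in the paper. The function names, the gain value, and the use of normalized image coordinates with known depths are all assumptions made for illustration.

```python
import numpy as np


def interaction_matrix(x, y, Z):
    """Interaction matrix of one normalized image point (x, y) at depth Z.

    Textbook form for a point feature; rows map the camera twist
    (vx, vy, vz, wx, wy, wz) to the image-plane velocity of the point.
    """
    return np.array([
        [-1.0 / Z, 0.0, x / Z, x * y, -(1.0 + x * x), y],
        [0.0, -1.0 / Z, y / Z, 1.0 + y * y, -x * y, -x],
    ])


def ibvs_velocity(points, targets, depths, gain=0.5):
    """Return a camera twist that drives `points` toward `targets`.

    points, targets: (N, 2) normalized image coordinates (hypothetical inputs).
    depths: N estimated depths, one per point (assumed known here).
    gain: proportional gain; 0.5 is an arbitrary illustrative value.
    """
    points = np.asarray(points, dtype=float)
    targets = np.asarray(targets, dtype=float)
    error = (points - targets).reshape(-1)  # stacked feature error e
    L = np.vstack([
        interaction_matrix(x, y, Z) for (x, y), Z in zip(points, depths)
    ])
    return -gain * np.linalg.pinv(L) @ error


if __name__ == "__main__":
    # Four target points at the corners of a square, observed with an x offset.
    targets = np.array([[-0.1, -0.1], [0.1, -0.1], [0.1, 0.1], [-0.1, 0.1]])
    points = targets + np.array([0.05, 0.0])
    print(ibvs_velocity(points, targets, depths=[1.0] * 4))
```

The law needs the tracked features to stay visible until contact, which is why loss of feedback in the final phase of a grasp (minimum range, occlusion, limited field of view) matters for this class of controller.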
