Exploring Optimal Control With Observations at a Cost


Jun 29, 2020

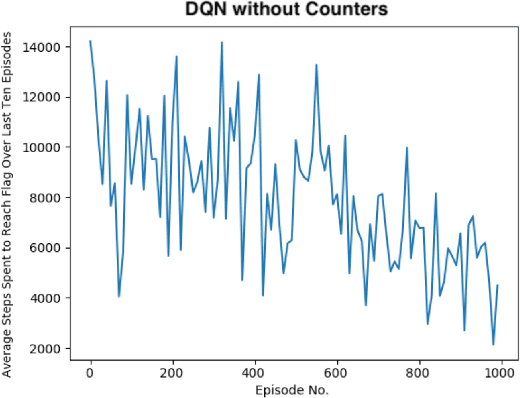

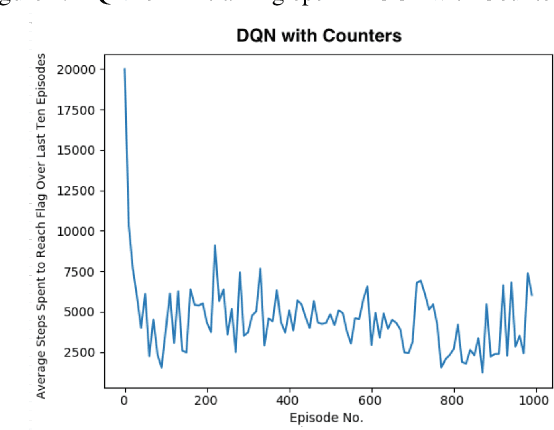

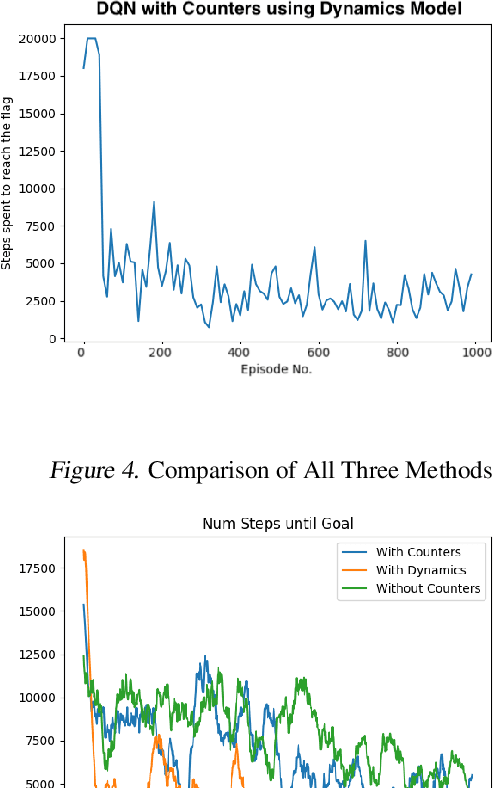

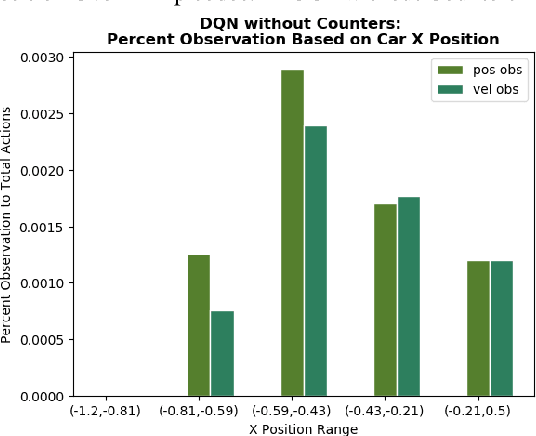

A current trend in the reinforcement learning for healthcare literature is to prepare clinical datasets by filling gaps with the most recent result of a test that was not administered at a given time step, known as the last-observation-carried-forward (LOCF) value, on the assumption that it is still an accurate indicator of the patient's current state. These values are carried forward without retaining information about how they were imputed, which leads to ambiguity. Our approach models this problem using OpenAI Gym's Mountain Car and aims to address when to observe the patient's physiological state and, in part, how to intervene, since we assume that we can act only after an observation. So far, we have found two things. First, for an LOCF implementation of the state space, augmenting the state with a counter for each state variable that tracks the time since that variable was last observed improves the predictive performance of an agent, supporting the notion of "informative missingness". Second, using a neural-network-based dynamics model to predict the most probable next value of non-observed state variables, instead of carrying forward the last observed value through LOCF, further improves the agent's performance, leading to faster convergence and reduced variance.
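The counter-augmented LOCF state described above can be illustrated with a minimal sketch. This is not the authors' code: the class name `LOCFStateTracker`, its methods, and the example values are hypothetical, and the only assumption taken from the abstract is that the state holds Mountain Car's two variables (position, velocity), each of which may or may not be observed at a given step.

```python
class LOCFStateTracker:
    """Hypothetical helper: keeps the last observed value of each state
    variable (LOCF) plus a counter of steps since it was last observed."""

    def __init__(self, initial_state):
        # Assume every variable is observed at the start of an episode.
        self.last_seen = list(initial_state)
        self.steps_since = [0] * len(self.last_seen)

    def update(self, true_state, observe_mask):
        """Advance one time step.

        true_state   -- the environment's actual state for this step
        observe_mask -- one boolean per variable; True means it was measured
        """
        for i, (value, observed) in enumerate(zip(true_state, observe_mask)):
            if observed:
                # Fresh measurement: store it and reset the counter.
                self.last_seen[i] = value
                self.steps_since[i] = 0
            else:
                # No measurement: carry the old value forward and age it.
                self.steps_since[i] += 1
        return self.augmented()

    def augmented(self):
        # Agent input: carried-forward values followed by their counters.
        return self.last_seen + self.steps_since


# Illustrative values only, in the form (position, velocity).
tracker = LOCFStateTracker([-0.5, 0.0])
s1 = tracker.update([-0.49, 0.01], [True, False])   # observe position only
s2 = tracker.update([-0.47, 0.02], [False, False])  # observe nothing
s3 = tracker.update([-0.44, 0.03], [False, True])   # observe velocity only
```

The counters let the agent distinguish a fresh measurement from a stale one, which plain LOCF hides. Under the second finding in the abstract, the carried-forward entries for non-observed variables would instead be filled by a learned dynamics model's prediction; that model is not sketched here.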
