Explore the Knowledge contained in Network Weights to Obtain Sparse Neural Networks


Mar 26, 2021

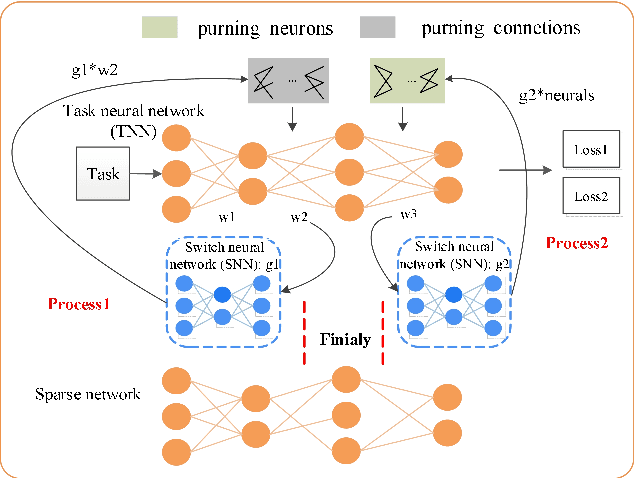

Sparse neural networks are important for achieving better generalization and improving computational efficiency. This paper proposes a novel learning approach that automatically obtains sparse fully connected layers in neural networks (NNs). We design a switcher neural network (SNN) to optimize the structure of the task neural network (TNN). The SNN takes the weights of the TNN as its inputs, and its outputs are used to switch the connections of the TNN on or off. In this way, the knowledge contained in the weights of the TNN is explored to determine the importance of each connection and, consequently, the structure of the TNN. The SNN and the TNN are trained alternately with stochastic gradient descent (SGD), both toward a common objective. After learning, we obtain the optimized structure and the optimized parameters of the TNN simultaneously. To evaluate the proposed approach, we conduct image classification experiments on various network structures and datasets. The network structures include LeNet, ResNet18, ResNet34, VggNet16 and MobileNet. The datasets include MNIST, CIFAR10 and CIFAR100. The experimental results show that our approach consistently yields sparse, well-performing fully connected layers in NNs.
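The alternating scheme described in the abstract can be illustrated with a toy sketch. The abstract does not specify the SNN architecture, the objective, or the training schedule, so everything below is an assumption made for illustration: the TNN is a single linear layer on a synthetic regression task, the hypothetical SNN is one shared two-parameter unit (`a`, `b`) that maps each weight to a gate, the common objective is mean squared error plus a hypothetical sparsity penalty `lam`, and the gates are treated as constants during the TNN step. It is not the authors' implementation.

```python
import math
import random


def sigmoid(z):
    z = max(-60.0, min(60.0, z))  # clamp so math.exp cannot overflow
    return 1.0 / (1.0 + math.exp(-z))


def switcher_gates(w, a, b):
    """Hypothetical SNN: one shared unit mapping each TNN weight to a gate in [0, 1]."""
    return [sigmoid(a * wi * wi + b) for wi in w]


def make_data(n_samples=64, seed=0):
    """Synthetic regression data in which only the first two inputs matter."""
    rng = random.Random(seed)
    true_w = [1.5, -2.0, 0.0, 0.0, 0.0, 0.0]
    data = []
    for _ in range(n_samples):
        x = [rng.uniform(-1.0, 1.0) for _ in true_w]
        y = sum(t * xi for t, xi in zip(true_w, x))
        data.append((x, y))
    return data


def train_alternately(steps=300, lr_tnn=0.05, lr_snn=0.01, lam=0.05, seed=0):
    data = make_data(seed=seed)
    m, n = len(data), len(data[0][0])
    rng = random.Random(seed + 1)
    w = [rng.uniform(-0.5, 0.5) for _ in range(n)]  # TNN weights
    a, b = 1.0, 0.0                                  # SNN parameters (assumed form)

    for _ in range(steps):
        # TNN step: gates are held fixed (assumption) while the weights move.
        g = switcher_gates(w, a, b)
        grad_w = [0.0] * n
        for x, y in data:
            err = sum(g[i] * w[i] * x[i] for i in range(n)) - y
            for i in range(n):
                grad_w[i] += 2.0 * err * g[i] * x[i] / m
        w = [wi - lr_tnn * gw for wi, gw in zip(w, grad_w)]

        # SNN step: weights are held fixed while the gate parameters move.
        # Same objective: MSE + lam * mean(gates).
        g = switcher_gates(w, a, b)
        grad_g = [lam / n] * n  # gradient of the sparsity term w.r.t. each gate
        for x, y in data:
            err = sum(g[i] * w[i] * x[i] for i in range(n)) - y
            for i in range(n):
                grad_g[i] += 2.0 * err * w[i] * x[i] / m
        # Chain rule through the sigmoid: dg/dz = g * (1 - g), z = a * w^2 + b.
        grad_a = sum(grad_g[i] * g[i] * (1.0 - g[i]) * w[i] * w[i] for i in range(n))
        grad_b = sum(grad_g[i] * g[i] * (1.0 - g[i]) for i in range(n))
        a -= lr_snn * grad_a
        b -= lr_snn * grad_b

    # Switch connections on or off by thresholding the gates (assumed rule).
    mask = [gi > 0.5 for gi in switcher_gates(w, a, b)]
    return w, a, b, mask


if __name__ == "__main__":
    w, a, b, mask = train_alternately()
    print("weights:", [round(wi, 3) for wi in w])
    print("kept connections:", mask)
```

Whether the resulting mask is actually sparse depends on the assumed hyperparameters (`lam`, the learning rates, the number of steps); the sketch is meant only to show the shape of the alternating updates, with both networks descending the same loss.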
