Explaining the Adaptive Generalisation Gap

Nov 15, 2020

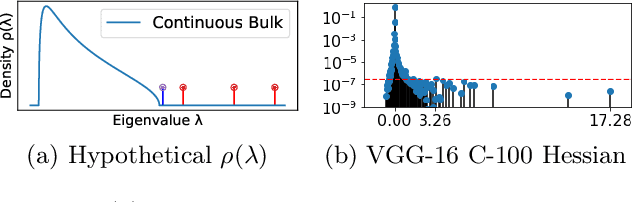

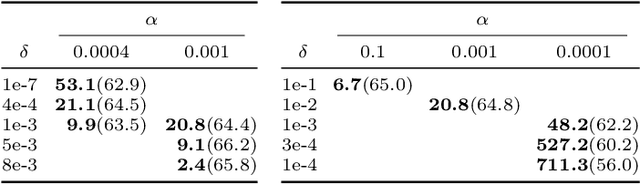

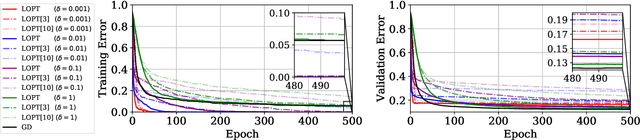

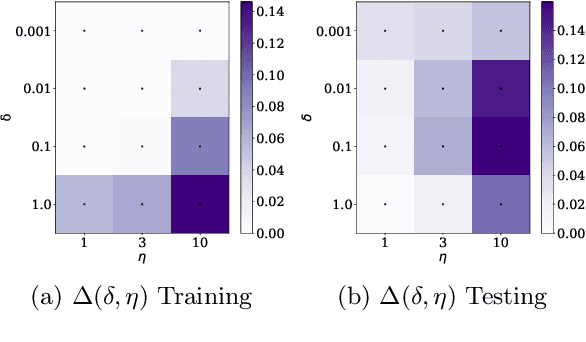

We conjecture that the difference in generalisation between adaptive and non-adaptive gradient methods stems from the failure of adaptive methods to account for the greater levels of noise associated with flatter directions in their estimates of local curvature. This conjecture, motivated by results in random matrix theory, has implications for optimisation in both simple convex settings and deep neural networks. We demonstrate that typical schedules used for adaptive methods (with low numerical stability or damping constants) bias movement towards flat directions relative to sharp directions, effectively amplifying the noise-to-signal ratio and harming generalisation. We show that the numerical stability/damping constant used in these methods can be decomposed into a learning rate reduction and a linear shrinkage of the estimated curvature matrix. We then demonstrate significant generalisation improvements by increasing the shrinkage coefficient, closing the generalisation gap entirely in our neural network experiments. Finally, we show that other popular modifications to adaptive methods, such as decoupled weight decay and partial adaptivity, calibrate parameter updates to make better use of sharper, more reliable directions.
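
To make the damping decomposition concrete, here is a minimal Python sketch. It assumes a diagonal curvature estimate B (for example, Adam's square-root second-moment estimate) damped by a constant δ, so the update is -α (B + δI)^{-1} g. One way to write the decomposition, assumed here rather than taken from the paper, is B + δI = (1 + δ)[(1 - ρ)B + ρI] with ρ = δ/(1 + δ). Under that assumption, the damped step equals a step with reduced learning rate α/(1 + δ) that uses a curvature estimate linearly shrunk towards the identity with coefficient ρ. The function names `damped_step`, `shrinkage_step` and `flat_to_sharp_ratio` are hypothetical illustrations, not code from the paper.

```python
import numpy as np


def damped_step(grad, curvature, lr, delta):
    """Adam-style step -lr * (B + delta * I)^{-1} g for a diagonal curvature estimate B."""
    return -lr * grad / (curvature + delta)


def shrinkage_step(grad, curvature, lr, delta):
    """The same step written as a reduced learning rate plus linear shrinkage of B.

    Assumed normalisation (an illustration, not necessarily the paper's):
    rho = delta / (1 + delta), so that
    B + delta * I = (1 + delta) * ((1 - rho) * B + rho * I).
    """
    rho = delta / (1.0 + delta)
    shrunk_curvature = (1.0 - rho) * curvature + rho  # shrink B towards the identity
    reduced_lr = lr / (1.0 + delta)                   # effective learning-rate reduction
    return -reduced_lr * grad / shrunk_curvature


def flat_to_sharp_ratio(lam_flat, lam_sharp, delta):
    """Step size along a flat direction divided by the step size along a sharp one,
    for equal gradient components, under (B + delta * I)^{-1} preconditioning."""
    return (lam_sharp + delta) / (lam_flat + delta)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    grad = rng.normal(size=5)
    curvature = rng.uniform(0.01, 10.0, size=5)  # synthetic positive curvature estimates
    for delta in (1e-8, 1e-3, 1.0, 100.0):
        same = np.allclose(damped_step(grad, curvature, 0.1, delta),
                           shrinkage_step(grad, curvature, 0.1, delta))
        ratio = flat_to_sharp_ratio(0.01, 10.0, delta)
        print(f"delta={delta:g}: decomposition matches={same}, flat/sharp step ratio={ratio:.3f}")
```

For equal gradient components along a flat direction (curvature 0.01) and a sharp one (curvature 10), the flat-to-sharp step ratio is roughly 1000 for δ = 1e-8 and roughly 1.1 for δ = 100. A small damping constant therefore strongly favours movement along flat, noisier directions, while a large one pushes the update towards SGD-like, curvature-agnostic steps.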
