Evolutionary Echo State Network: evolving reservoirs in the Fourier space


Jun 10, 2022

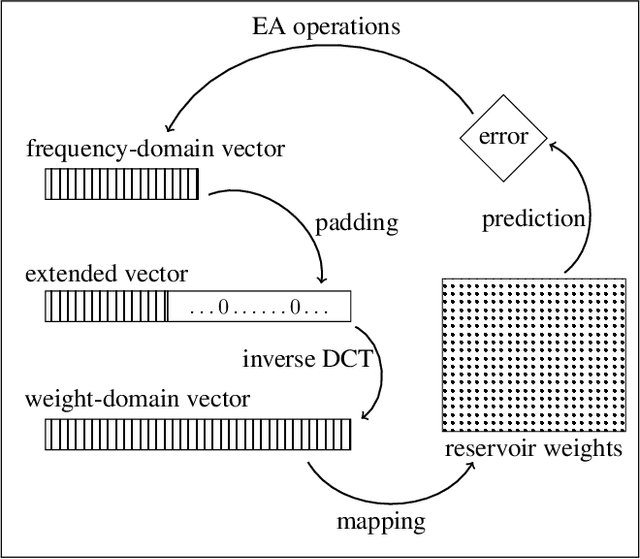

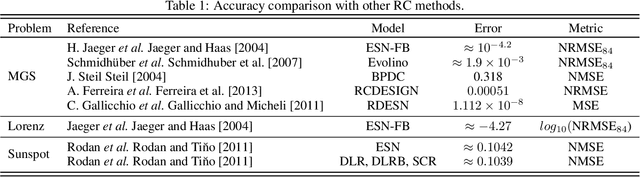

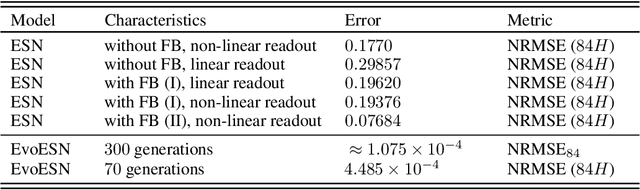

The Echo State Network (ESN) is a class of Recurrent Neural Network with a large number of hidden-hidden weights (in the so-called reservoir). The canonical ESN and its variations have recently received significant attention due to their remarkable success in modeling non-linear dynamical systems. The reservoir is randomly connected, with fixed weights that do not change during learning; only the weights from the reservoir to the output are trained. Since the reservoir is fixed during training, we may wonder whether the computational power of the recurrent structure is fully harnessed. In this article, we propose a new computational model of the ESN type that represents the reservoir weights in Fourier space and fine-tunes these weights by applying genetic algorithms in the frequency domain. The main interest is that this procedure works in a much smaller space than the classical ESN, thus providing a dimensionality reduction of the initial method. The proposed technique allows us to exploit the benefits of the large recurrent structure while avoiding the training problems of gradient-based methods. We provide a detailed experimental study that demonstrates the good performance of our approach on well-known chaotic systems and real-world data.
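To make the idea concrete, the following is a minimal sketch of evolving a reservoir through a small set of Fourier coefficients. It is not the authors' implementation: the abstract does not specify the transform, the genetic operators, or the benchmark, so everything here is an assumption chosen for illustration. In particular, the function names (`decode_reservoir`, `run_reservoir`, `fitness`, `evolve`), the choice of a truncated 2-D FFT, the elitist Gaussian-mutation loop, the ridge-regression readout, and the logistic-map toy task are all hypothetical.

```python
import numpy as np

def decode_reservoir(genome, n, k, rho=0.9):
    """Map 2*k*k real genes to an n x n reservoir via an inverse 2-D FFT."""
    coeffs = genome[:k * k] + 1j * genome[k * k:]
    spectrum = np.zeros((n, n), dtype=complex)
    spectrum[:k, :k] = coeffs.reshape(k, k)  # keep only low frequencies
    w = np.fft.ifft2(spectrum).real
    radius = np.max(np.abs(np.linalg.eigvals(w)))
    # Rescale to spectral radius rho (a common ESN heuristic, assumed here).
    return w * (rho / radius) if radius > 0 else w

def run_reservoir(w, w_in, u):
    """Collect states x(t+1) = tanh(W x(t) + W_in u(t)) for a scalar input."""
    x = np.zeros(w.shape[0])
    states = np.empty((len(u), w.shape[0]))
    for t, u_t in enumerate(u):
        x = np.tanh(w @ x + w_in * u_t)
        states[t] = x
    return states

def fitness(genome, n, k, w_in, u, y, split, ridge=1e-6):
    """Validation MSE of a ridge-regression readout (lower is better)."""
    s = run_reservoir(decode_reservoir(genome, n, k), w_in, u)
    s_tr, y_tr = s[:split], y[:split]
    # Only the readout is trained, as in a classical ESN.
    w_out = np.linalg.solve(s_tr.T @ s_tr + ridge * np.eye(n), s_tr.T @ y_tr)
    return float(np.mean((s[split:] @ w_out - y[split:]) ** 2))

def evolve(n=30, k=4, pop_size=12, generations=10, sigma=0.1, seed=0):
    """Elitist GA over Fourier coefficients; returns (best genome, history)."""
    rng = np.random.default_rng(seed)
    # Toy task (assumption): one-step-ahead prediction of the logistic map.
    series = np.empty(401)
    series[0] = 0.4
    for t in range(400):
        series[t + 1] = 3.9 * series[t] * (1.0 - series[t])
    u, y, split = series[:-1], series[1:], 300
    w_in = rng.uniform(-0.5, 0.5, n)

    def score(population):
        return np.array([fitness(g, n, k, w_in, u, y, split) for g in population])

    pop = rng.normal(size=(pop_size, 2 * k * k))  # 2*k*k genes vs n*n weights
    history = []
    for _ in range(generations):
        scores = score(pop)
        order = np.argsort(scores)
        history.append(float(scores[order[0]]))
        elite = pop[order[:pop_size // 2]]                    # selection
        children = elite + sigma * rng.normal(size=elite.shape)  # mutation
        pop = np.vstack([elite, children])
    scores = score(pop)
    history.append(float(scores.min()))
    return pop[np.argmin(scores)], history

if __name__ == "__main__":
    best, history = evolve()
    print("genes searched:", best.size, "reservoir weights:", 30 * 30)
    print("best validation MSE per generation:", history)
```

With these illustrative defaults the search runs over 32 genes rather than 900 reservoir weights, which is the dimensionality reduction the abstract refers to. Note that keeping only a low-frequency block yields a smooth, low-rank reservoir; whether the paper uses this particular truncation is not stated in the abstract.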
