Evaluation of Automated Image Descriptions for Visually Impaired Students


Jun 29, 2021

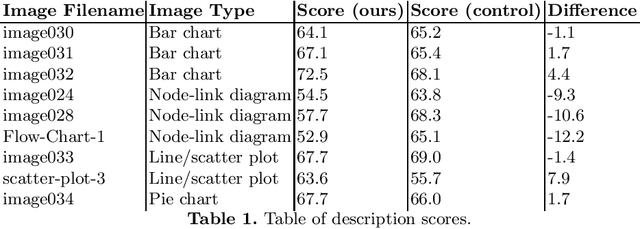

Illustrations are widely used in education, and alternatives to them are sometimes unavailable for visually impaired students. Those students would therefore benefit greatly from a system that describes illustrations automatically, but only if the descriptions are complete, correct, and easily understood through a screen reader. In this paper, we report on a study assessing automated image descriptions. We interviewed experts to establish evaluation criteria, which we then used to create both an evaluation questionnaire for sighted non-expert raters and a set of description templates. We used this questionnaire to evaluate the quality of descriptions that could be generated by a template-based automatic image describer. We present evidence that these templates have the potential to generate useful descriptions and that the questionnaire identifies problems with the description templates.
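To make the idea of a template-based describer more concrete, the sketch below fills a fixed sentence template from structured data about a simple bar chart and applies a crude completeness check. This is not the authors' describer: the `BarChart` input format, the template wording, and the `describe` and `is_complete` helpers are all hypothetical, chosen only to illustrate how a template yields a linear, screen-reader-friendly description and how one evaluation criterion (completeness) might be checked automatically.

```python
from dataclasses import dataclass


# Hypothetical structured input; the abstract does not specify the describer's input format.
@dataclass
class BarChart:
    title: str
    x_label: str
    y_label: str
    bars: dict[str, float]  # category name -> bar value


# Hypothetical description template, read in a fixed order: overview, axes, data, summary.
TEMPLATE = (
    "Bar chart titled '{title}'. "
    "The horizontal axis shows {x_label}; the vertical axis shows {y_label}. "
    "There are {n} bars. {values} "
    "The highest value is {max_cat} at {max_val:g}."
)


def describe(chart: BarChart) -> str:
    """Fill the template so a screen reader can read the chart top to bottom."""
    # List every bar in its original order, one short sentence per bar.
    values = " ".join(f"{cat}: {val:g}." for cat, val in chart.bars.items())
    # Pick the category with the largest value for the closing summary sentence.
    max_cat = max(chart.bars, key=chart.bars.get)
    return TEMPLATE.format(
        title=chart.title,
        x_label=chart.x_label,
        y_label=chart.y_label,
        n=len(chart.bars),
        values=values,
        max_cat=max_cat,
        max_val=chart.bars[max_cat],
    )


def is_complete(chart: BarChart, description: str) -> bool:
    """Crude completeness proxy: every category name appears in the description."""
    return all(cat in description for cat in chart.bars)


if __name__ == "__main__":
    chart = BarChart(
        title="Rainfall by season",
        x_label="season",
        y_label="rainfall in millimetres",
        bars={"Spring": 120, "Summer": 80, "Autumn": 150, "Winter": 200},
    )
    text = describe(chart)
    print(text)
    print("complete:", is_complete(chart, text))
```

A fixed template guarantees a consistent reading order, which matters for screen-reader users, but it can only be as complete and correct as the template itself. That is why, in the study described above, the templates are evaluated with a questionnaire rather than assumed to be adequate.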
