Evaluating subgroup disparity using epistemic uncertainty in mammography

Jul 15, 2021

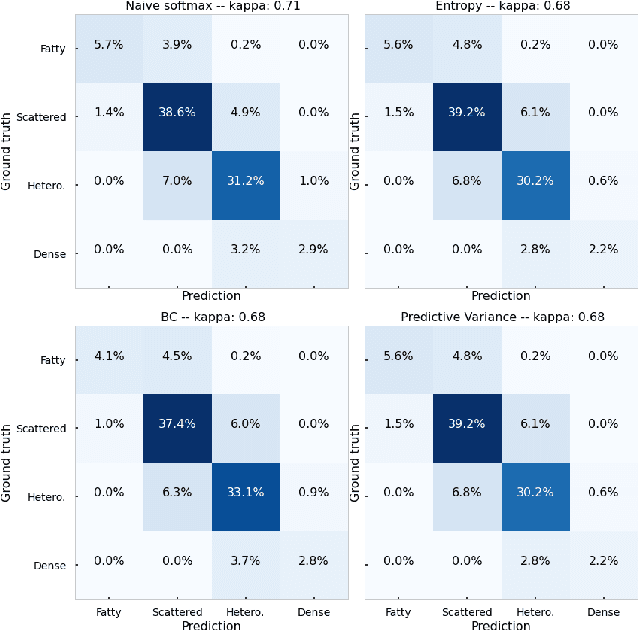

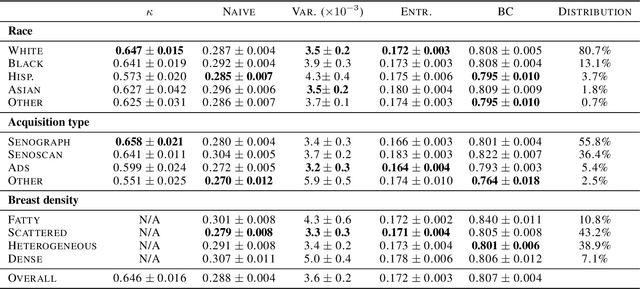

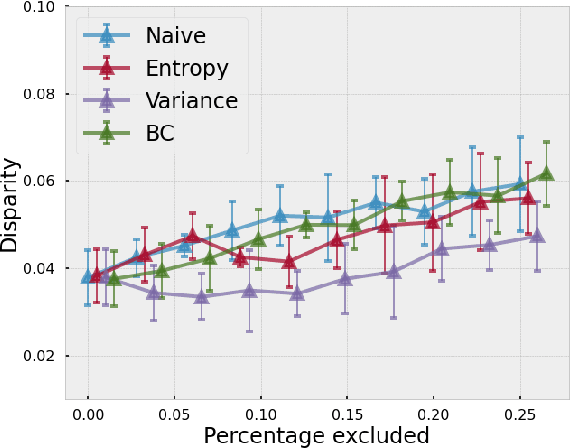

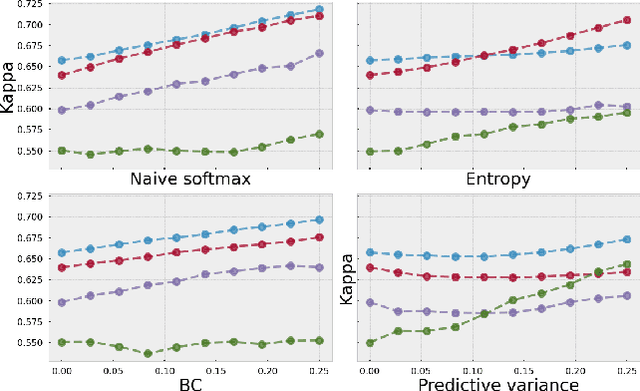

As machine learning (ML) continues to be integrated into healthcare systems that affect clinical decision making, new strategies will be needed to detect and evaluate subgroup disparities effectively and to ensure accountability and generalizability in clinical workflows. In this paper, we explore how epistemic uncertainty can be used to evaluate disparity across patient demographic (race) and data acquisition (scanner) subgroups for breast density assessment on a dataset of 108,190 mammograms collected from 33 clinical sites. Our results show that even if aggregate performance is comparable, the choice of uncertainty quantification metric can significantly affect results at the subgroup level. We hope this analysis can promote further work on how uncertainty can be leveraged to increase the transparency of machine learning applications for clinical deployment.
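To make the idea of comparing uncertainty metrics across subgroups concrete, here is a minimal sketch. It is an illustration, not the paper's implementation. The abstract does not name the metrics or the sampling scheme, so the sketch assumes that each exam has several stochastic predictions (for example from Monte Carlo dropout or an ensemble) and that predictive entropy and mutual information are the metrics being compared. The function names, subgroup labels, and toy probabilities are all hypothetical.

```python
import math
from collections import defaultdict


def entropy(probs):
    """Shannon entropy (in nats) of one categorical distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def uncertainty_metrics(samples):
    """Return (predictive_entropy, mutual_information) for one exam.

    `samples` is a list of T class-probability vectors, assumed to come
    from T stochastic forward passes or T ensemble members.
    """
    t = len(samples)
    k = len(samples[0])
    mean = [sum(s[c] for s in samples) / t for c in range(k)]
    predictive_entropy = entropy(mean)                      # total uncertainty
    expected_entropy = sum(entropy(s) for s in samples) / t
    # Mutual information is the part attributable to model disagreement,
    # a common proxy for epistemic uncertainty.
    return predictive_entropy, predictive_entropy - expected_entropy


def mean_uncertainty_by_subgroup(records):
    """Average both metrics within each subgroup.

    `records` is an iterable of (subgroup_label, samples) pairs.
    """
    totals = defaultdict(lambda: [0.0, 0.0, 0])
    for group, samples in records:
        pe, mi = uncertainty_metrics(samples)
        acc = totals[group]
        acc[0] += pe
        acc[1] += mi
        acc[2] += 1
    return {
        group: {
            "predictive_entropy": acc[0] / acc[2],
            "mutual_information": acc[1] / acc[2],
        }
        for group, acc in totals.items()
    }


# Hypothetical toy data: two scanner subgroups, one exam each, two classes.
toy_records = [
    ("scanner_A", [[0.5, 0.5], [0.5, 0.5]]),  # samples agree, each is ambiguous
    ("scanner_B", [[1.0, 0.0], [0.0, 1.0]]),  # samples are confident but disagree
]
summary = mean_uncertainty_by_subgroup(toy_records)
```

In this toy case both subgroups have the same predictive entropy, but only `scanner_B` has non-zero mutual information. This is one way two subgroups can look alike under one metric and differ under another.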