Evaluating Explainable Methods for Predictive Process Analytics: A Functionally-Grounded Approach

Paper and Code

Dec 08, 2020

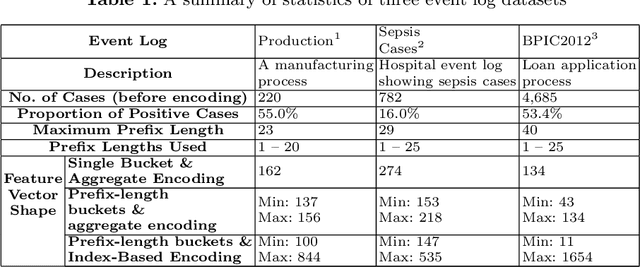

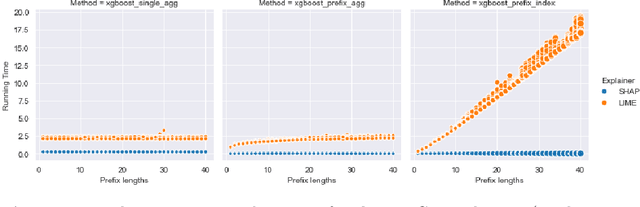

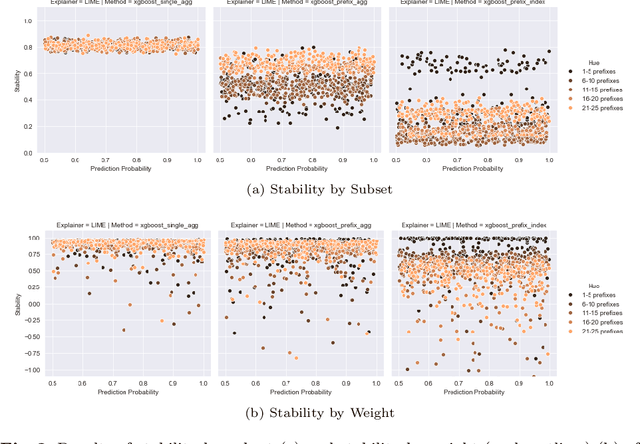

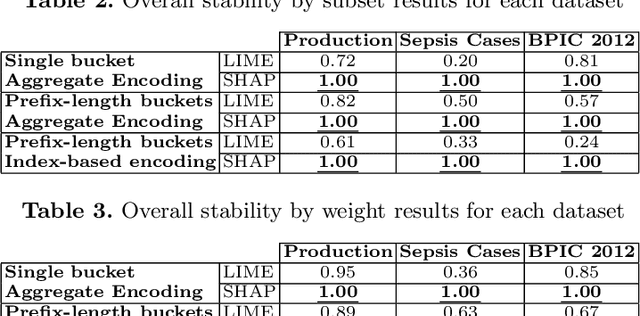

Predictive process analytics focuses on predicting the future states of running instances of a business process. While advanced machine learning techniques have been used to increase accuracy of predictions, the resulting predictive models lack transparency. Current explainable machine learning methods, such as LIME and SHAP, can be used to interpret black box models. However, it is unclear how fit for purpose these methods are in explaining process predictive models. In this paper, we draw on evaluation measures used in the field of explainable AI and propose functionally-grounded evaluation metrics for assessing explainable methods in predictive process analytics. We apply the proposed metrics to evaluate the performance of LIME and SHAP in interpreting process predictive models built on XGBoost, which has been shown to be relatively accurate in process predictions. We conduct the evaluation using three open source, real-world event logs and analyse the evaluation results to derive insights. The research contributes to understanding the trustworthiness of explainable methods for predictive process analytics as a fundamental and key step towards human user-oriented evaluation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge