Evaluating Error Bound for Physics-Informed Neural Networks on Linear Dynamical Systems


Jul 03, 2022

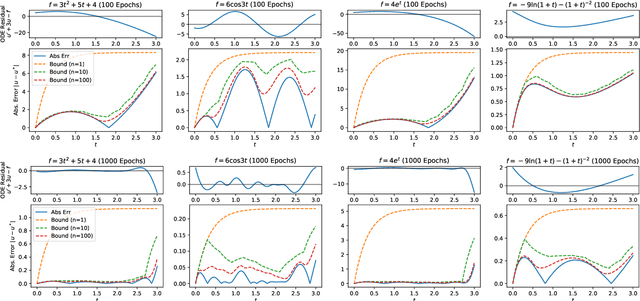

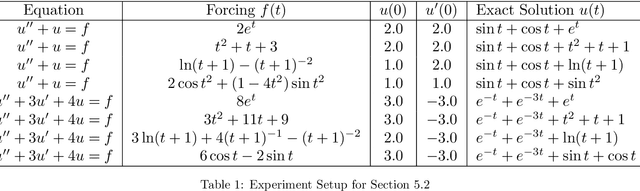

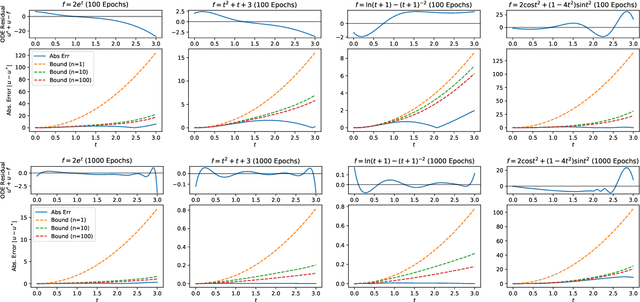

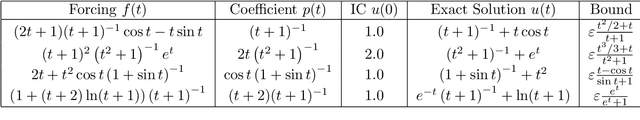

There have been extensive studies on solving differential equations using physics-informed neural networks. While this method has proven advantageous in many cases, a major criticism is its lack of analytical error bounds, which makes it less credible than traditional counterparts such as the finite difference method. This paper shows that one can mathematically derive explicit error bounds for physics-informed neural networks trained on a class of linear systems of differential equations. More importantly, evaluating such error bounds requires only the infinity norm of the differential equation residual over the domain of interest. Our work shows a link between the network residual, which is known and used as the loss function, and the absolute error of the solution, which is generally unknown. Our approach is semi-phenomenological and independent of knowledge of the actual solution and of the complexity or architecture of the network. Using the method of manufactured solutions on linear ODEs and systems of linear ODEs, we empirically verify the error evaluation algorithm and demonstrate that the actual error lies strictly within our derived bound.
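To make the link between residual and error concrete, here is a minimal sketch for the simplest scalar case, u' + λu = f with λ > 0 and an approximation that satisfies the initial condition exactly. In that case the error e = û − u obeys e' + λe = r with e(0) = 0, so |e(t)| ≤ ‖r‖∞ (1 − e^(−λt)) / λ. This is the textbook bound for that one equation and is not claimed to be the bound derived in the paper. The names `lam`, `u_hat`, and `du_hat` are hypothetical, and `u_hat` is a hand-perturbed manufactured solution standing in for a trained network.

```python
import numpy as np

# Assumed toy problem (not from the paper): u' + lam * u = f on [0, T], u(0) = 0.
lam = 2.0
T = 3.0
t = np.linspace(0.0, T, 2001)

# Manufactured solution u(t) = sin(t), which fixes the forcing term f.
def u_exact(t):
    return np.sin(t)

def f(t):
    return np.cos(t) + lam * np.sin(t)

# Stand-in for a trained network: the exact solution plus a small perturbation
# that vanishes at t = 0, so the initial condition is met exactly.
eps = 1e-2

def u_hat(t):
    return np.sin(t) + eps * t * np.sin(5.0 * t)

def du_hat(t):
    # Analytic derivative of u_hat; a real PINN would use automatic differentiation.
    return np.cos(t) + eps * (np.sin(5.0 * t) + 5.0 * t * np.cos(5.0 * t))

# Residual of the ODE evaluated on the grid, and its (grid-sampled) infinity norm.
residual = du_hat(t) + lam * u_hat(t) - f(t)
res_inf = np.max(np.abs(residual))

# Pointwise bound |e(t)| <= res_inf * (1 - exp(-lam * t)) / lam.
bound = res_inf * (1.0 - np.exp(-lam * t)) / lam
abs_err = np.abs(u_hat(t) - u_exact(t))

print("residual infinity norm:", res_inf)
print("max absolute error:    ", abs_err.max())
print("max of the bound:      ", bound.max())
print("error within bound:    ", bool(np.all(abs_err <= bound + 1e-12)))
```

Note that the bound uses only the residual, never `u_exact`; the exact solution appears solely to check the bound afterwards. The infinity norm here is sampled on a finite grid, so it slightly underestimates the true supremum, and a finer grid or a safety margin would be needed for a rigorous guarantee.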
