Evaluating adversarial robustness in simulated cerebellum


Dec 05, 2020

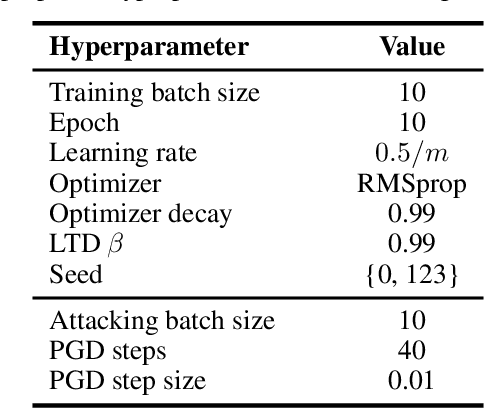

It is well known that artificial neural networks are vulnerable to adversarial examples, and great effort has gone into improving their robustness. However, such examples are usually imperceptible to humans, so their effect on biological neural circuits is largely unknown. This paper will investigate adversarial robustness in a simulated cerebellum, a well-studied supervised learning system in computational neuroscience. Specifically, we propose to study three characteristic features of the cerebellum: (i) network width; (ii) long-term depression at the parallel fiber-Purkinje cell synapses; and (iii) sparse connectivity in the granule layer. We hypothesize that all three will improve robustness. To the best of our knowledge, this is the first attempt to examine adversarial robustness in simulated cerebellum models. We remark that both positive and negative results are meaningful: if the hypothesis holds, the biological model yields engineering insights for designing more robust learning systems; if it does not, neuroscientists are encouraged to try fooling the biological system with adversarial attacks in experiments. This makes the project especially suitable for a pre-registration study.
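To make the setup concrete, the following is a minimal sketch of what "attacking a simulated cerebellum" could look like. It is not the authors' model, since the abstract gives no architectural details. It assumes a Marr-Albus-style feedforward network with a wide granule layer in which each unit receives only a few mossy-fiber inputs, a single linear Purkinje readout, and a fast-gradient-sign perturbation as the attack. Long-term depression is not modeled here. All function names, layer sizes, and the choice of attack are hypothetical and chosen only for illustration.

```python
import random


def make_sparse_granule_weights(n_inputs, n_granule, fan_in, rng):
    """Each granule cell samples `fan_in` inputs; all other weights stay zero."""
    weights = [[0.0] * n_inputs for _ in range(n_granule)]
    for row in weights:
        for j in rng.sample(range(n_inputs), fan_in):
            row[j] = rng.gauss(0.0, 1.0)
    return weights


def forward(x, granule_w, purkinje_w):
    """Return (granule activations, scalar Purkinje output)."""
    hidden = [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in granule_w]
    output = sum(v * h for v, h in zip(purkinje_w, hidden))
    return hidden, output


def input_gradient(x, target, granule_w, purkinje_w):
    """Gradient of 0.5 * (output - target)**2 with respect to the input x."""
    hidden, output = forward(x, granule_w, purkinje_w)
    err = output - target
    grad = [0.0] * len(x)
    for row, v, h in zip(granule_w, purkinje_w, hidden):
        if h > 0.0:  # ReLU passes gradient only through active granule cells
            for j, w in enumerate(row):
                grad[j] += err * v * w
    return grad


def fgsm(x, target, granule_w, purkinje_w, eps):
    """Move each input coordinate by eps in the sign of the loss gradient."""
    grad = input_gradient(x, target, granule_w, purkinje_w)
    return [xi + eps * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]


# Hypothetical sizes: 8 inputs expanded to 64 granule cells, 4 inputs per cell.
rng = random.Random(0)
granule_w = make_sparse_granule_weights(n_inputs=8, n_granule=64, fan_in=4, rng=rng)
purkinje_w = [rng.gauss(0.0, 0.1) for _ in range(64)]
x = [rng.uniform(-1.0, 1.0) for _ in range(8)]
x_adv = fgsm(x, target=0.0, granule_w=granule_w, purkinje_w=purkinje_w, eps=0.05)
```

Under these assumptions, the width and sparsity hypotheses correspond to varying `n_granule` and `fan_in` and comparing the loss on `x` against the loss on `x_adv`.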
