Error Compensation Framework for Flow-Guided Video Inpainting

Paper and Code

Jul 21, 2022

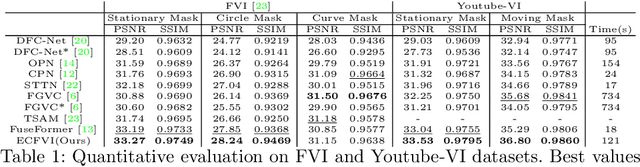

The key to video inpainting is to use correlation information from as many reference frames as possible. Existing flow-based propagation methods split the video synthesis process into multiple steps: flow completion -> pixel propagation -> synthesis. However, there is a significant drawback that the errors in each step continue to accumulate and amplify in the next step. To this end, we propose an Error Compensation Framework for Flow-guided Video Inpainting (ECFVI), which takes advantage of the flow-based method and offsets its weaknesses. We address the weakness with the newly designed flow completion module and the error compensation network that exploits the error guidance map. Our approach greatly improves the temporal consistency and the visual quality of the completed videos. Experimental results show the superior performance of our proposed method with the speed up of x6, compared to the state-of-the-art methods. In addition, we present a new benchmark dataset for evaluation by supplementing the weaknesses of existing test datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge