Equal Confusion Fairness: Measuring Group-Based Disparities in Automated Decision Systems

Paper and Code

Jul 02, 2023

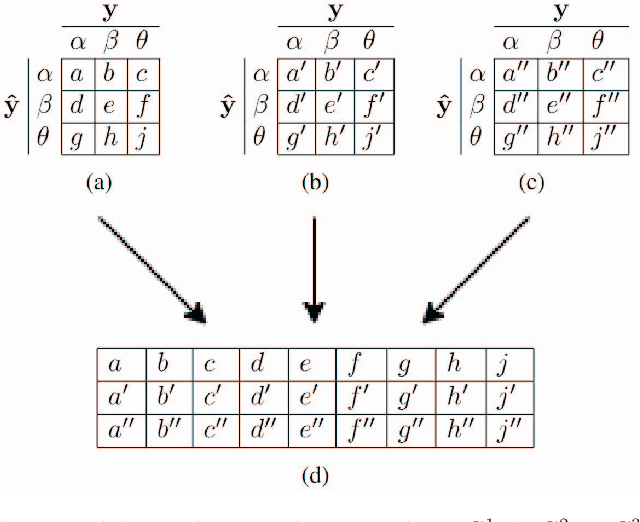

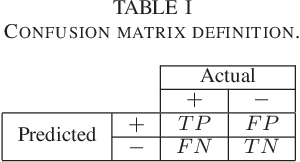

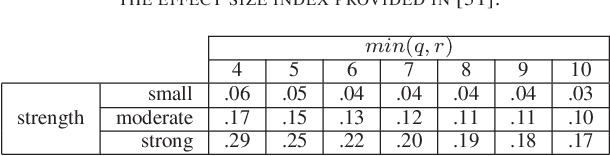

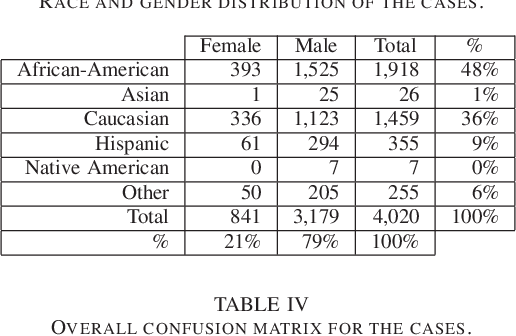

As artificial intelligence plays an increasingly substantial role in decisions affecting humans and society, the accountability of automated decision systems has been receiving increasing attention from researchers and practitioners. Fairness, which is concerned with eliminating unjust treatment and discrimination against individuals or sensitive groups, is a critical aspect of accountability. Yet, for evaluating fairness, there is a plethora of fairness metrics in the literature that employ different perspectives and assumptions that are often incompatible. This work focuses on group fairness. Most group fairness metrics desire a parity between selected statistics computed from confusion matrices belonging to different sensitive groups. Generalizing this intuition, this paper proposes a new equal confusion fairness test to check an automated decision system for fairness and a new confusion parity error to quantify the extent of any unfairness. To further analyze the source of potential unfairness, an appropriate post hoc analysis methodology is also presented. The usefulness of the test, metric, and post hoc analysis is demonstrated via a case study on the controversial case of COMPAS, an automated decision system employed in the US to assist judges with assessing recidivism risks. Overall, the methods and metrics provided here may assess automated decision systems' fairness as part of a more extensive accountability assessment, such as those based on the system accountability benchmark.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge