Empirical Study of Overfitting in Deep FNN Prediction Models for Breast Cancer Metastasis

Paper and Code

Aug 03, 2022

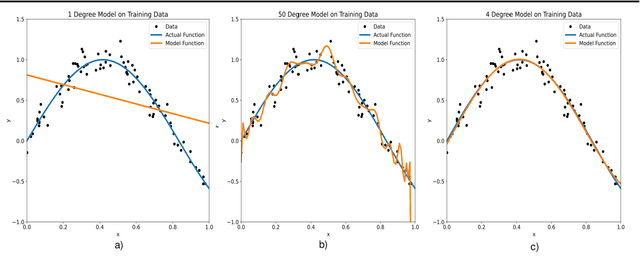

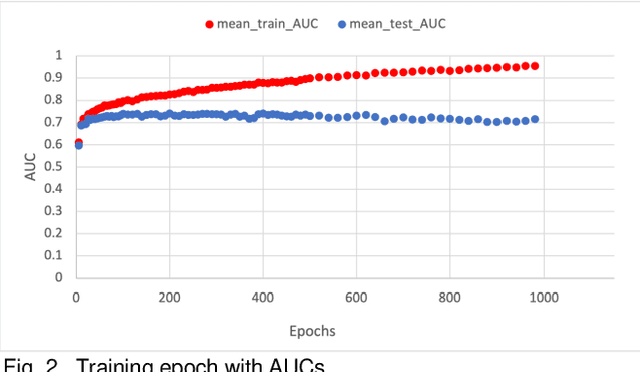

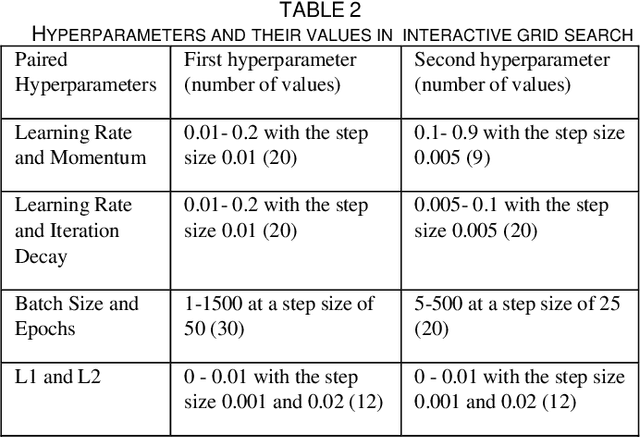

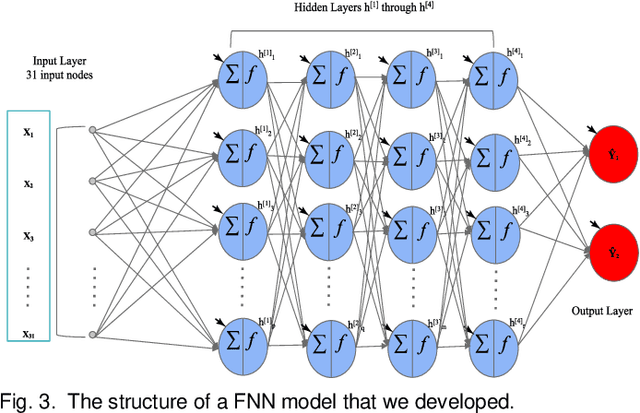

Overfitting is defined as the fact that the current model fits a specific data set perfectly, resulting in weakened generalization, and ultimately may affect the accuracy in predicting future data. In this research we used an EHR dataset concerning breast cancer metastasis to study overfitting of deep feedforward Neural Networks (FNNs) prediction models. We included 11 hyperparameters of the deep FNNs models and took an empirical approach to study how each of these hyperparameters was affecting both the prediction performance and overfitting when given a large range of values. We also studied how some of the interesting pairs of hyperparameters were interacting to influence the model performance and overfitting. The 11 hyperparameters we studied include activate function; weight initializer, number of hidden layers, learning rate, momentum, decay, dropout rate, batch size, epochs, L1, and L2. Our results show that most of the single hyperparameters are either negatively or positively corrected with model prediction performance and overfitting. In particular, we found that overfitting overall tends to negatively correlate with learning rate, decay, batch sides, and L2, but tends to positively correlate with momentum, epochs, and L1. According to our results, learning rate, decay, and batch size may have a more significant impact on both overfitting and prediction performance than most of the other hyperparameters, including L1, L2, and dropout rate, which were designed for minimizing overfitting. We also find some interesting interacting pairs of hyperparameters such as learning rate and momentum, learning rate and decay, and batch size and epochs. Keywords: Deep learning, overfitting, prediction, grid search, feedforward neural networks, breast cancer metastasis.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge