Embedding models for recommendation under contextual constraints

Jun 21, 2019

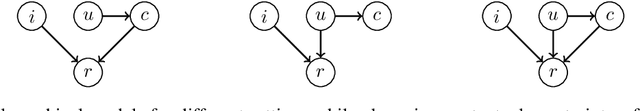

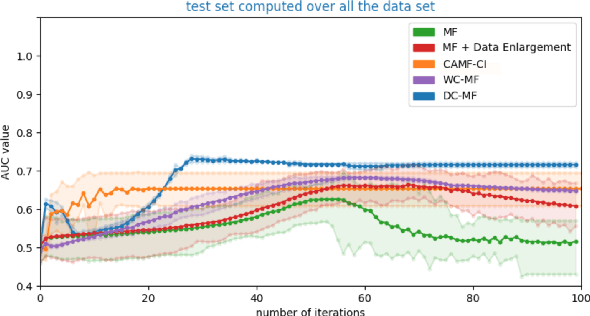

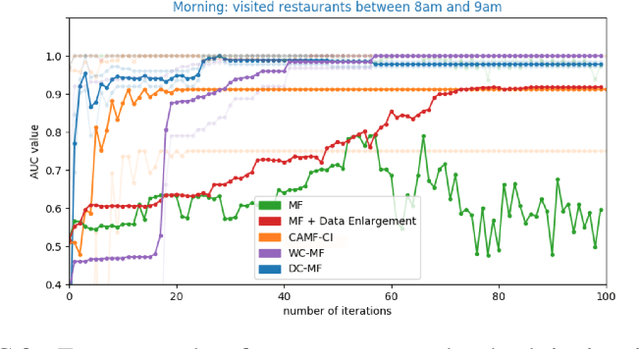

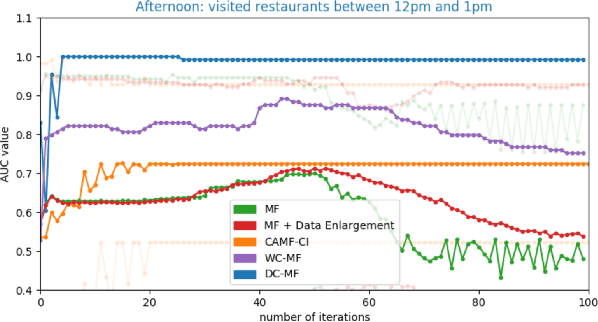

Embedding models, which learn latent representations of users and items from user-item interaction patterns, are a key component of recommendation systems. In many applications, contextual constraints must be applied to refine recommendations, e.g., when a user specifies a price range or a product category filter. The conventional approach, for both context-aware and standard models, is to retrieve items and apply the constraints as independent operations. The order in which these two steps are executed can cause significant problems. For example, applying constraints a posteriori can result in incomplete recommendations or low-quality results for the tail of the distribution (i.e., less popular items). As a result, the additional information that the constraint conveys about user intent may not be accurately captured. In this paper, we propose integrating the information provided by the contextual constraint into the similarity computation, merging constraint application and retrieval into a single operation in the embedding space. This technique allows us to generate high-quality recommendations for the specified constraint. Our approach learns constraint representations jointly with the user and item embeddings. We incorporate our methods into a matrix factorization model and perform an experimental evaluation on one internal dataset and two real-world datasets. Our results show significant improvements in predictive performance compared to context-aware and standard models.
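To make the contrast between applying constraints a posteriori and folding them into retrieval concrete, here is a minimal pure-Python sketch. It assumes a hard categorical constraint, toy 2-D embeddings, and a hypothetical additive scoring rule score(u, i | c) = (p_u + q_c) · v_i, where q_c stands in for a learned constraint embedding. This scoring rule is an illustrative assumption, not necessarily the formulation used in the paper, and the function names `post_filter_top_k` and `constrained_top_k` are hypothetical.

```python
from typing import Dict, List, Sequence, Set

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner-product similarity between two embeddings."""
    return sum(x * y for x, y in zip(a, b))


def post_filter_top_k(user: Vector, items: Dict[str, Vector],
                      allowed: Set[str], k: int) -> List[str]:
    """Conventional two-step approach: retrieve the top-k items by
    user-item score, then drop those that violate the constraint."""
    ranked = sorted(items, key=lambda i: dot(user, items[i]), reverse=True)
    return [i for i in ranked[:k] if i in allowed]


def constrained_top_k(user: Vector, constraint: Vector,
                      items: Dict[str, Vector], allowed: Set[str],
                      k: int) -> List[str]:
    """Hypothetical single-step approach: shift the query by a constraint
    embedding (assumed additive) and rank only items that satisfy it."""
    query = [u + c for u, c in zip(user, constraint)]
    candidates = [i for i in items if i in allowed]
    ranked = sorted(candidates, key=lambda i: dot(query, items[i]),
                    reverse=True)
    return ranked[:k]


# Toy data: items a, b belong to category X; items c, d to category Y.
user = [1.0, 0.0]
items = {
    "a": [0.9, 0.1],
    "b": [0.8, 0.0],
    "c": [0.1, 0.9],
    "d": [0.2, 0.3],
}
category_y = {"c", "d"}    # the user's filter: category Y only
constraint_y = [0.0, 1.0]  # hand-set stand-in for a learned embedding of Y

print(post_filter_top_k(user, items, category_y, k=2))                # []
print(constrained_top_k(user, constraint_y, items, category_y, k=2))  # ['c', 'd']
```

Post-filtering returns an empty list because both retrieved items (a and b) violate the filter, which is the "incomplete recommendations" failure mode. Ranking inside the constrained set fills all k slots whenever enough eligible items exist, and the constraint vector also reorders them: with a zero constraint vector, d (score 0.2) would rank above c (0.1), whereas the shifted query ranks c first (1.0 vs. 0.5). In the paper the constraint representations are learned jointly with the user and item embeddings; here `constraint_y` is simply fixed by hand.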