Efficient Agnostic Learning with Average Smoothness

Paper and Code

Sep 29, 2023

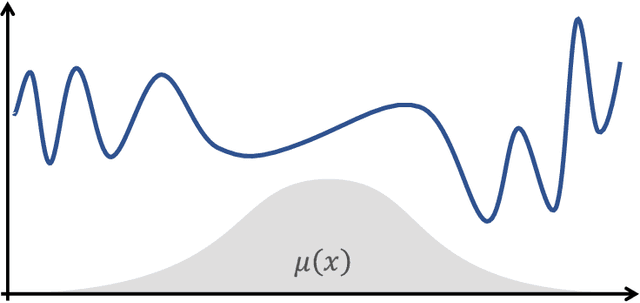

We study distribution-free nonparametric regression following a notion of average smoothness initiated by Ashlagi et al. (2021), which measures the "effective" smoothness of a function with respect to an arbitrary unknown underlying distribution. While the recent work of Hanneke et al. (2023) established tight uniform convergence bounds for average-smooth functions in the realizable case and provided a computationally efficient realizable learning algorithm, both of these results currently lack analogs in the general agnostic (i.e. noisy) case. In this work, we fully close these gaps. First, we provide a distribution-free uniform convergence bound for average-smoothness classes in the agnostic setting. Second, we match the derived sample complexity with a computationally efficient agnostic learning algorithm. Our results, which are stated in terms of the intrinsic geometry of the data and hold over any totally bounded metric space, show that the guarantees recently obtained for realizable learning of average-smooth functions transfer to the agnostic setting. At the heart of our proof, we establish the uniform convergence rate of a function class in terms of its bracketing entropy, which may be of independent interest.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge