Effective Distant Supervision for Temporal Relation Extraction

Paper and Code

Oct 24, 2020

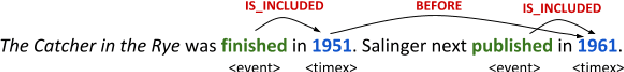

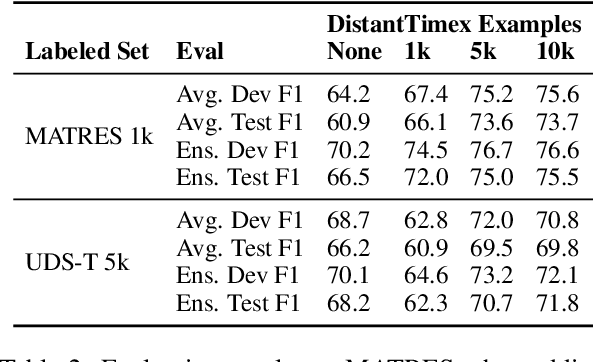

A principal barrier to training temporal relation extraction models in new domains is the lack of varied, high quality examples and the challenge of collecting more. We present a method of automatically collecting distantly-supervised examples of temporal relations. We scrape and automatically label event pairs where the temporal relations are made explicit in text, then mask out those explicit cues, forcing a model trained on this data to learn other signals. We demonstrate that a pre-trained Transformer model is able to transfer from the weakly labeled examples to human-annotated benchmarks in both zero-shot and few-shot settings, and that the masking scheme is important in improving generalization.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge