DSSRNN: Decomposition-Enhanced State-Space Recurrent Neural Network for Time-Series Analysis

Paper and Code

Dec 01, 2024

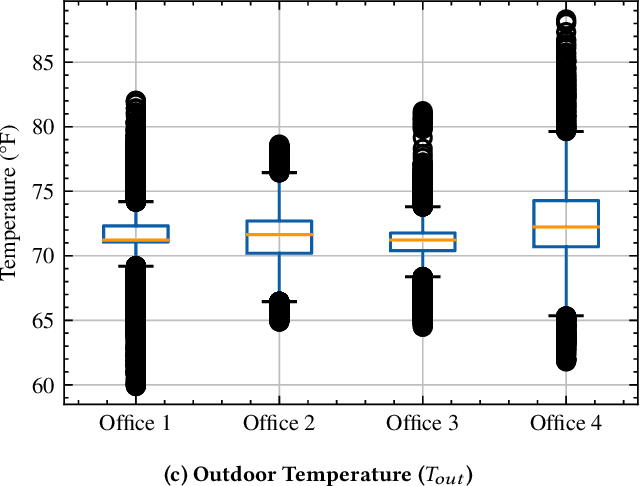

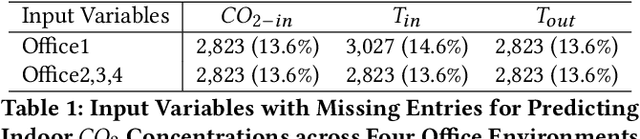

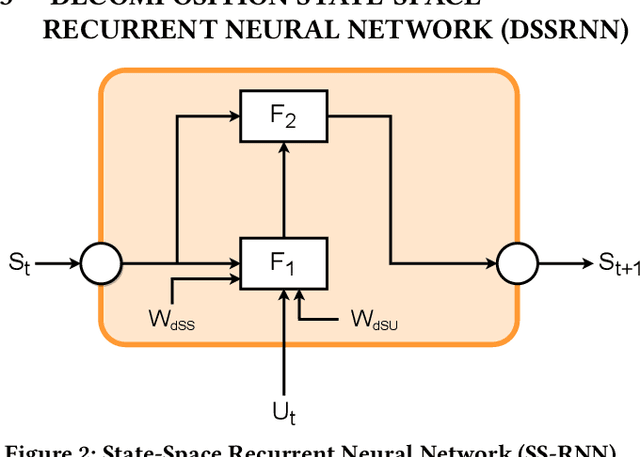

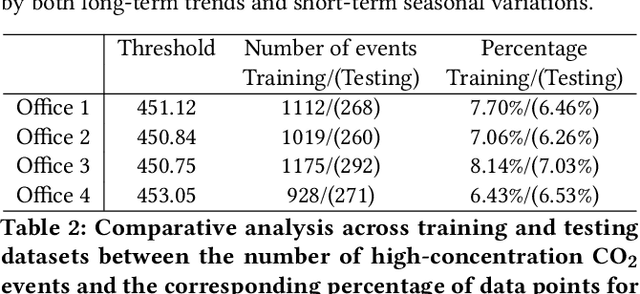

Time series forecasting is a crucial yet challenging task in machine learning, requiring domain-specific knowledge due to its wide-ranging applications. While recent Transformer models have improved forecasting capabilities, they come with high computational costs. Linear-based models have shown better accuracy than Transformers but still fall short of ideal performance. To address these challenges, we introduce the Decomposition State-Space Recurrent Neural Network (DSSRNN), a novel framework designed for both long-term and short-term time series forecasting. DSSRNN uniquely combines decomposition analysis to capture seasonal and trend components with state-space models and physics-based equations. We evaluate DSSRNN's performance on indoor air quality datasets, focusing on CO2 concentration prediction across various forecasting horizons. Results demonstrate that DSSRNN consistently outperforms state-of-the-art models, including transformer-based architectures, in terms of both Mean Squared Error (MSE) and Mean Absolute Error (MAE). For example, at the shortest horizon (T=96) in Office 1, DSSRNN achieved an MSE of 0.378 and an MAE of 0.401, significantly lower than competing models. Additionally, DSSRNN exhibits superior computational efficiency compared to more complex models. While not as lightweight as the DLinear model, DSSRNN achieves a balance between performance and efficiency, with only 0.11G MACs and 437MiB memory usage, and an inference time of 0.58ms for long-term forecasting. This work not only showcases DSSRNN's success but also establishes a new benchmark for physics-informed machine learning in environmental forecasting and potentially other domains.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge