Domain Specific, Semi-Supervised Transfer Learning for Medical Imaging


May 24, 2020

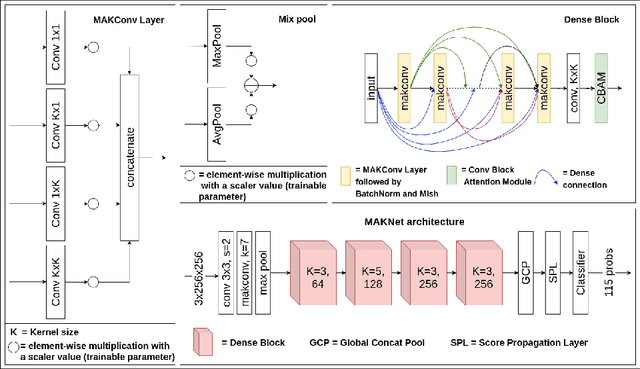

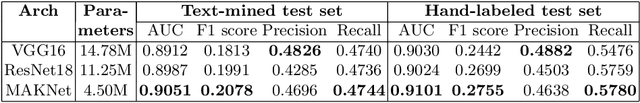

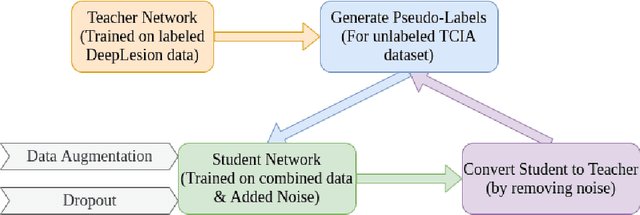

The limited availability of annotated medical imaging data poses a challenge for deep learning algorithms. Although transfer learning generally mitigates this hurdle, knowledge transfer across disparate domains has been shown to be less effective. Smaller architectures, on the other hand, have been found to learn better features. Consequently, we propose a lightweight architecture that uses mixed asymmetric kernels (MAKNet) to reduce the number of parameters significantly. Additionally, we train the proposed architecture using semi-supervised learning to provide pseudo-labels for a large medical dataset, which in turn assists with transfer learning. The proposed MAKNet provides better classification performance with 60-70% fewer parameters than popular architectures. Experimental results also highlight the importance of domain-specific knowledge for effective transfer learning.
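To give a rough sense of why asymmetric kernels can shrink a network, the sketch below counts the learnable parameters of a single square k x k convolution and compares it with a 1 x k convolution followed by a k x 1 convolution. This is generic convolution arithmetic, not the MAKNet design: the abstract does not specify the layer layout, so the helper names, the channel width of 64, and the choice to keep the channel count equal across both layers are all assumptions made for illustration.

```python
def conv_params(kernel_h, kernel_w, in_channels, out_channels, bias=True):
    """Learnable parameters in one 2D convolution layer."""
    weights = kernel_h * kernel_w * in_channels * out_channels
    return weights + (out_channels if bias else 0)


def square_params(k, channels):
    """One k x k convolution with `channels` inputs and outputs."""
    return conv_params(k, k, channels, channels)


def asymmetric_pair_params(k, channels):
    """A 1 x k convolution followed by a k x 1 convolution (hypothetical layout)."""
    return (conv_params(1, k, channels, channels)
            + conv_params(k, 1, channels, channels))


def reduction(k, channels):
    """Fraction of parameters saved by the asymmetric pair over the square kernel."""
    return 1.0 - asymmetric_pair_params(k, channels) / square_params(k, channels)


if __name__ == "__main__":
    channels = 64  # assumed width, chosen only for illustration
    for k in (3, 5, 7):
        print(k, square_params(k, channels),
              asymmetric_pair_params(k, channels),
              round(reduction(k, channels), 3))
```

Under these assumptions the saving grows with kernel size, since the weight count scales with 2k rather than k². The figures this produces describe a single layer pair only and should not be read as a reproduction of the parameter reduction reported for the full architecture.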
