Domain Adaptive SiamRPN++ for Object Tracking in the Wild

Paper and Code

Jun 15, 2021

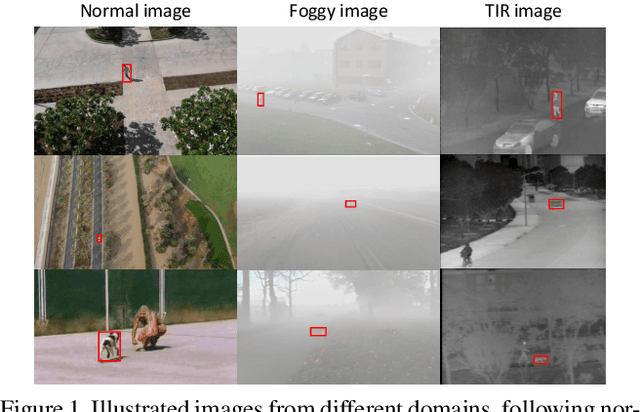

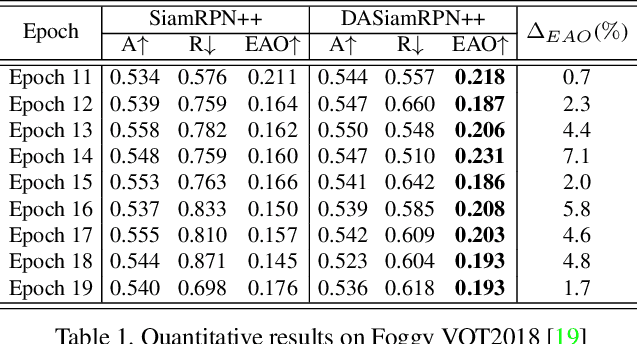

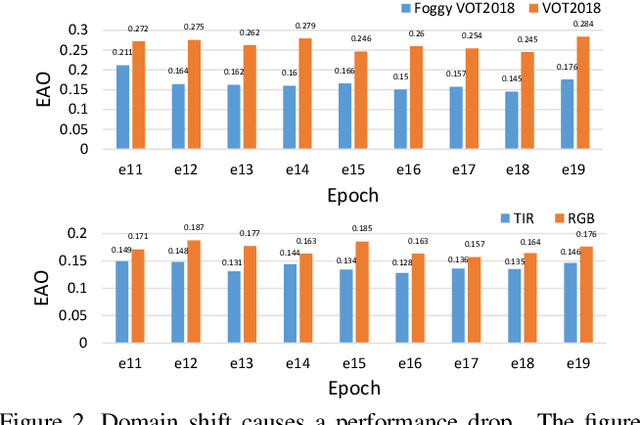

Benefit from large-scale training data, recent advances in Siamese-based object tracking have achieved compelling results on the normal sequences. Whilst Siamese-based trackers assume training and test data follow an identical distribution. Suppose there is a set of foggy or rainy test sequences, it cannot be guaranteed that the trackers trained on the normal images perform well on the data belonging to other domains. The problem of domain shift among training and test data has already been discussed in object detection and semantic segmentation areas, which, however, has not been investigated for visual tracking. To this end, based on SiamRPN++, we introduce a Domain Adaptive SiamRPN++, namely DASiamRPN++, to improve the cross-domain transferability and robustness of a tracker. Inspired by A-distance theory, we present two domain adaptive modules, Pixel Domain Adaptation (PDA) and Semantic Domain Adaptation (SDA). The PDA module aligns the feature maps of template and search region images to eliminate the pixel-level domain shift caused by weather, illumination, etc. The SDA module aligns the feature representations of the tracking target's appearance to eliminate the semantic-level domain shift. PDA and SDA modules reduce the domain disparity by learning domain classifiers in an adversarial training manner. The domain classifiers enforce the network to learn domain-invariant feature representations. Extensive experiments are performed on the standard datasets of two different domains, including synthetic foggy and TIR sequences, which demonstrate the transferability and domain adaptability of the proposed tracker.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge