Distribution-Agnostic Model-Agnostic Meta-Learning

Feb 12, 2020

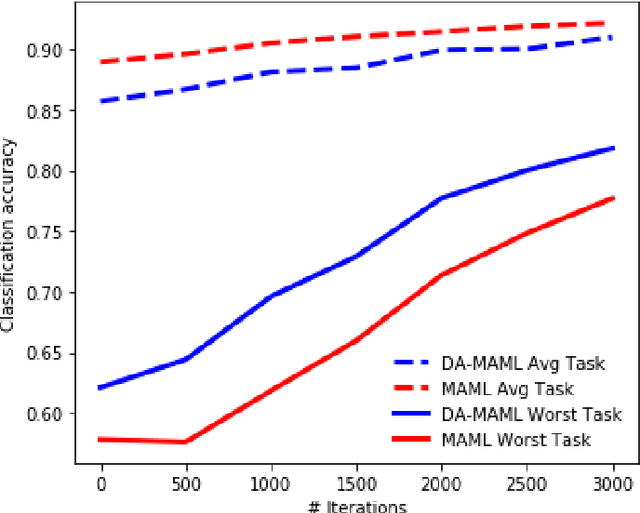

The Model-Agnostic Meta-Learning (MAML) algorithm \citep{finn2017model} has been celebrated for its efficiency and generality, as it has demonstrated success in quickly learning the parameters of an arbitrary learning model. However, MAML implicitly assumes that the tasks come from a particular distribution, and optimizes the expected (or sample average) loss over tasks drawn from this distribution. Here, we address this limitation of MAML by reformulating the objective function as a min-max problem, where the maximization is over the set of possible distributions over tasks. Our proposed algorithm is the first distribution-agnostic and model-agnostic meta-learning method, and we show that it converges to an $\epsilon$-accurate point at the rate of $\mathcal{O}(1/\epsilon^2)$ in the convex setting and to an $(\epsilon, \delta)$-stationary point at the rate of $\mathcal{O}(\max\{1/\epsilon^5, 1/\delta^5\})$ in the nonconvex setting. We also provide numerical experiments that demonstrate the worst-case superiority of our algorithm in comparison to MAML.
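To make the min-max reformulation concrete, the following is a minimal sketch on a toy problem, not the algorithm from the paper, whose details the abstract does not give. It assumes a finite set of $n$ tasks, so that the set of distributions over tasks is the probability simplex, and it assumes the usual one-step MAML adaptation with inner step size `alpha`, giving the objective $\min_w \max_{p \in \Delta_n} \sum_i p_i f_i(w - \alpha \nabla f_i(w))$. The quadratic task losses, the plain projected gradient descent-ascent solver, and all function names and step sizes are illustrative assumptions.

```python
import numpy as np

def project_to_simplex(v):
    """Euclidean projection of a vector onto the probability simplex."""
    u = np.sort(v)[::-1]                      # sort in descending order
    css = np.cumsum(u)
    ks = np.arange(1, v.size + 1)
    rho = np.nonzero(u * ks > (css - 1.0))[0][-1]
    theta = (css[rho] - 1.0) / (rho + 1.0)
    return np.maximum(v - theta, 0.0)

def adapted_losses_and_grads(w, centers, alpha):
    """Post-adaptation loss of each toy task and its gradient in w.

    Assumed toy task i: f_i(w) = 0.5 * ||w - c_i||^2. One inner gradient
    step maps w to w - alpha * (w - c_i), so the adapted loss is
    0.5 * (1 - alpha)^2 * ||w - c_i||^2.
    """
    diff = w - centers                        # shape (n_tasks, dim)
    losses = 0.5 * (1.0 - alpha) ** 2 * np.sum(diff ** 2, axis=1)
    grads = (1.0 - alpha) ** 2 * diff
    return losses, grads

def minmax_meta_train(centers, alpha=0.1, eta_w=0.1, eta_p=0.01, steps=2000):
    """Projected gradient descent-ascent on the toy min-max objective."""
    n_tasks, dim = centers.shape
    w = np.zeros(dim)                         # meta-initialization
    p = np.full(n_tasks, 1.0 / n_tasks)       # start from the uniform distribution
    for _ in range(steps):
        losses, grads = adapted_losses_and_grads(w, centers, alpha)
        w = w - eta_w * (p @ grads)           # descent on the p-weighted loss
        p = project_to_simplex(p + eta_p * losses)  # ascent over task weights
    return w, p

# Three identical tasks and one outlier task (hypothetical data).
centers = np.array([[0.0], [0.0], [0.0], [4.0]])
alpha = 0.1

w_robust, p_robust = minmax_meta_train(centers, alpha=alpha)
# For these quadratics, the minimizer of the uniform average adapted loss
# is the mean of the task centers.
w_average = centers.mean(axis=0)

worst_robust = adapted_losses_and_grads(w_robust, centers, alpha)[0].max()
worst_average = adapted_losses_and_grads(w_average, centers, alpha)[0].max()
print("worst-case adapted loss, min-max:", worst_robust)
print("worst-case adapted loss, average:", worst_average)
```

In this toy setup the average-loss solution sits close to the three identical tasks and performs poorly on the outlier, while the min-max solution shifts weight toward the outlier task and trades average performance for a lower worst-case loss.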
