Distributed Estimation of Gaussian Correlations


Jun 25, 2018

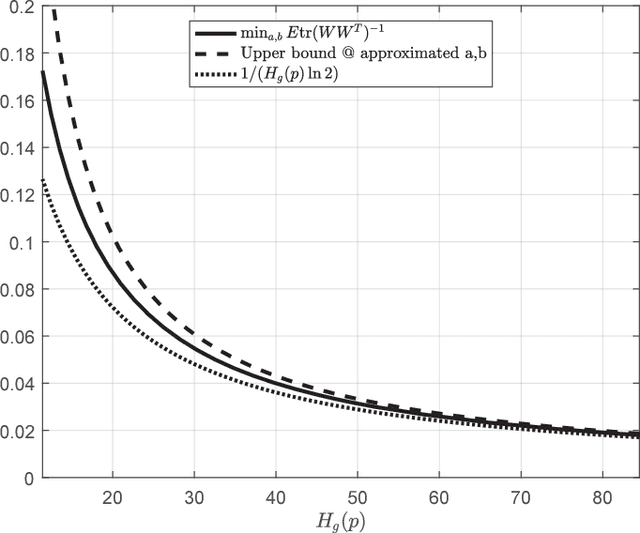

We study a distributed estimation problem in which two remotely located parties, Alice and Bob, observe an unlimited number of i.i.d. samples corresponding to two different parts of a random vector. Alice can send $k$ bits on average to Bob, who in turn wants to estimate the cross-correlation matrix between the two parts of the vector. In the case where the parties observe jointly Gaussian scalar random variables with an unknown correlation $\rho$, we obtain two constructive and simple unbiased estimators attaining a variance of $(1-\rho^2)/(2k\ln 2)$, which coincides with a known but non-constructive random coding result of Zhang and Berger. We extend our approach to the vector Gaussian case, which has not been treated before, and construct an estimator that is uniformly better than the scalar estimator applied separately to each of the correlations. We then show that the Gaussian performance can essentially be attained even when the distribution is completely unknown. This in particular implies that in the general problem of distributed correlation estimation, the variance can decay at least as $O(1/k)$ with the number of transmitted bits. This behavior, however, is not tight: we give an example of a rich family of distributions for which local samples reveal essentially nothing about the correlations, and where a slightly modified estimator attains a variance of $2^{-\Omega(k)}$.
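
To make the scalar Gaussian setting concrete, here is a minimal simulation sketch of one natural $k$-bit scheme. The abstract does not describe the paper's estimators, so the scheme is an assumption for illustration: Alice looks at $2^k$ of her samples and sends the index of the largest one (exactly $k$ bits, rather than $k$ bits on average), and Bob divides his sample at that index by the expected maximum of $2^k$ standard normals. The function names `expected_max_std_normal` and `simulate` are hypothetical. Since $Y = \rho X + \sqrt{1-\rho^2}\,Z$ with $Z$ independent of $X$, this estimate is unbiased, and because the expected maximum grows like $\sqrt{2k\ln 2}$, its variance behaves like $(1-\rho^2)/(2k\ln 2)$ only for large $k$.

```python
import math
import random


def expected_max_std_normal(n, lo=-10.0, hi=10.0, steps=20000):
    """E[max of n i.i.d. N(0,1)], via trapezoidal integration of
    x * n * pdf(x) * cdf(x)**(n-1) over [lo, hi]."""
    h = (hi - lo) / steps
    total = 0.0
    for i in range(steps + 1):
        x = lo + i * h
        pdf = math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)
        cdf = 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
        weight = 0.5 if i in (0, steps) else 1.0
        total += weight * x * n * pdf * cdf ** (n - 1)
    return total * h


def simulate(rho, k, trials=2000, seed=0):
    """Monte Carlo mean and variance of the illustrative max-index estimator."""
    rng = random.Random(seed)
    n = 2 ** k                          # number of samples Alice inspects
    e_max = expected_max_std_normal(n)  # normalizer known to Bob in advance
    noise_scale = math.sqrt(1.0 - rho * rho)
    estimates = []
    for _ in range(trials):
        # Alice's samples X_i and Bob's correlated samples Y_i.
        xs = [rng.gauss(0.0, 1.0) for _ in range(n)]
        ys = [rho * x + noise_scale * rng.gauss(0.0, 1.0) for x in xs]
        # Alice's message: the k-bit index of her largest sample.
        j = max(range(n), key=xs.__getitem__)
        # Bob's estimate: his sample at that index, normalized.
        estimates.append(ys[j] / e_max)
    mean = sum(estimates) / trials
    var = sum((e - mean) ** 2 for e in estimates) / (trials - 1)
    return mean, var


if __name__ == "__main__":
    rho, k = 0.5, 8
    mean, var = simulate(rho, k)
    asymptotic = (1.0 - rho * rho) / (2.0 * k * math.log(2.0))
    print(f"empirical mean     : {mean:.4f} (true rho = {rho})")
    print(f"empirical variance : {var:.4f}")
    print(f"(1-rho^2)/(2k ln2) : {asymptotic:.4f} (large-k comparison only)")
```

At small $k$ the empirical variance sits above the asymptotic expression, because the expected maximum is still noticeably smaller than $\sqrt{2k\ln 2}$ and the maximum itself has nonzero variance.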
