Distilling Reinforcement Learning Policies for Interpretable Robot Locomotion: Gradient Boosting Machines and Symbolic Regression

Paper and Code

Mar 21, 2024

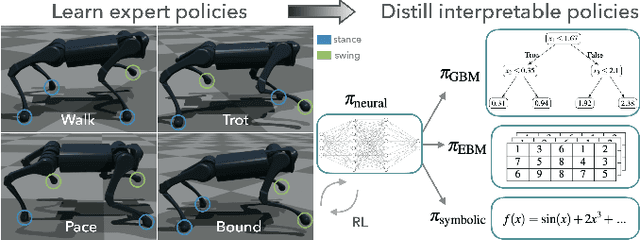

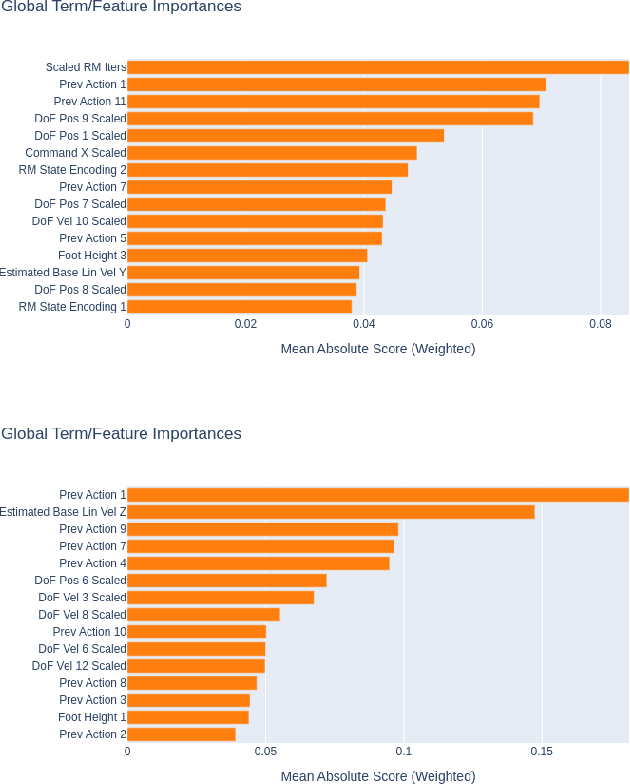

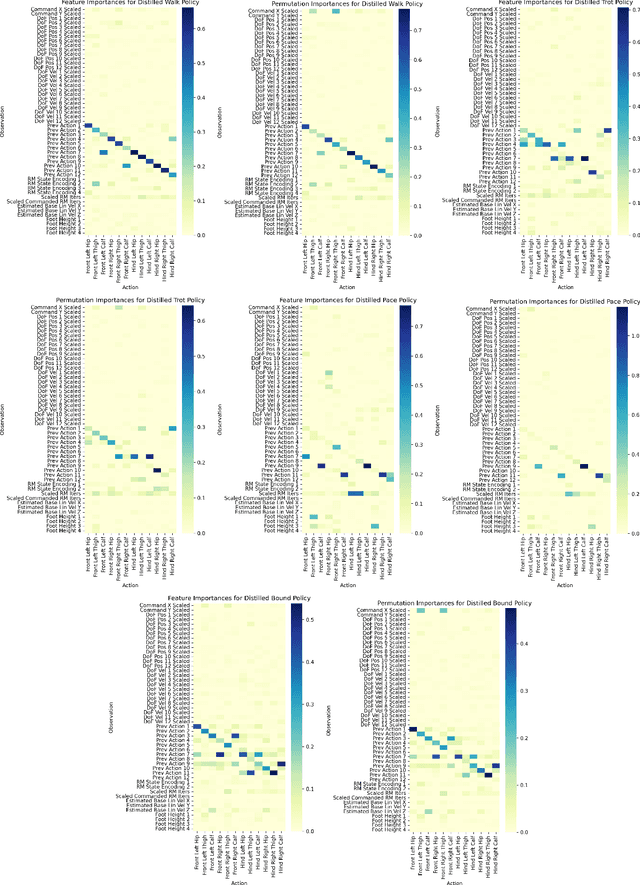

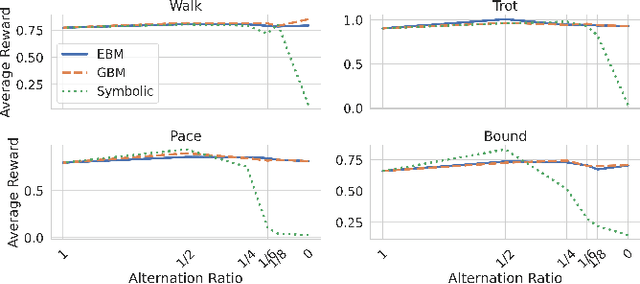

Recent advancements in reinforcement learning (RL) have led to remarkable achievements in robot locomotion capabilities. However, the complexity and ``black-box'' nature of neural network-based RL policies hinder their interpretability and broader acceptance, particularly in applications demanding high levels of safety and reliability. This paper introduces a novel approach to distill neural RL policies into more interpretable forms using Gradient Boosting Machines (GBMs), Explainable Boosting Machines (EBMs) and Symbolic Regression. By leveraging the inherent interpretability of generalized additive models, decision trees, and analytical expressions, we transform opaque neural network policies into more transparent ``glass-box'' models. We train expert neural network policies using RL and subsequently distill them into (i) GBMs, (ii) EBMs, and (iii) symbolic policies. To address the inherent distribution shift challenge of behavioral cloning, we propose to use the Dataset Aggregation (DAgger) algorithm with a curriculum of episode-dependent alternation of actions between expert and distilled policies, to enable efficient distillation of feedback control policies. We evaluate our approach on various robot locomotion gaits -- walking, trotting, bounding, and pacing -- and study the importance of different observations in joint actions for distilled policies using various methods. We train neural expert policies for 205 hours of simulated experience and distill interpretable policies with only 10 minutes of simulated interaction for each gait using the proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge