Discriminability Distillation in Group Representation Learning

Paper and Code

Sep 01, 2020

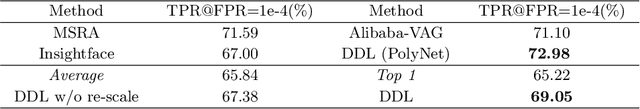

Learning group representation is a commonly concerned issue in tasks where the basic unit is a group, set, or sequence. Previously, the research community tries to tackle it by aggregating the elements in a group based on an indicator either defined by humans such as the quality and saliency, or generated by a black box such as the attention score. This article provides a more essential and explicable view. We claim the most significant indicator to show whether the group representation can be benefited from one of its element is not the quality or an inexplicable score, but the discriminability w.r.t. the model. We explicitly design the discrimiability using embedded class centroids on a proxy set. We show the discrimiability knowledge has good properties that can be distilled by a light-weight distillation network and can be generalized on the unseen target set. The whole procedure is denoted as discriminability distillation learning (DDL). The proposed DDL can be flexibly plugged into many group-based recognition tasks without influencing the original training procedures. Comprehensive experiments on various tasks have proven the effectiveness of DDL for both accuracy and efficiency. Moreover, it pushes forward the state-of-the-art results on these tasks by an impressive margin.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge