Dimensionality Reduction Flows

Paper and Code

Aug 05, 2019

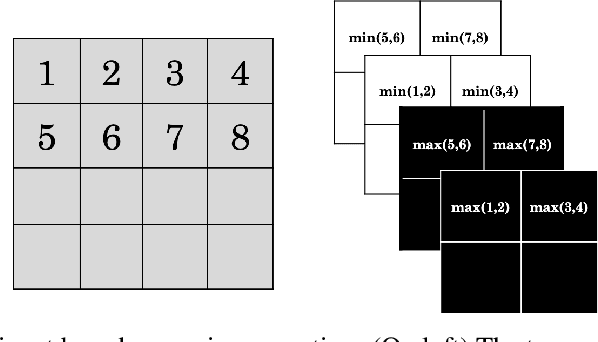

Deep generative modelling with flows has gained popularity owing to tractable exact log-likelihood estimation together with efficient training and synthesis. Trained flow models carry rich information about the structure and local variance of the input data. However, a bottleneck for scaling flow models to higher dimensions is that the latent space has the same size as the high-dimensional input space. In this paper, we propose methods to reduce the latent space dimension of flow models. Our first approach replaces the standard high-dimensional prior with a prior learned from a low-dimensional noise space. To achieve exact log-likelihood with reduced dimensionality, our second approach improves the multi-scale architecture (Dinh et al., 2016) by factorizing dimensions according to their likelihood contribution. Applying our method to state-of-the-art flow models, we demonstrate improvements in log-likelihood score on standard image benchmarks. Our work introduces a data-dependent factorization scheme that is more efficient than the static counterparts in prior work.
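To make the two ideas in the abstract concrete, the following is a minimal NumPy sketch, not the paper's implementation. It assumes a toy elementwise affine flow (so the Jacobian is diagonal and the log-determinant splits exactly per dimension) with a standard normal prior. The function names, the `factor_out_largest` flag, and the choice of which dimensions to factor out are all hypothetical; the abstract does not specify them.

```python
import numpy as np


def affine_flow(x, log_scale, shift):
    """Toy elementwise flow z = x * exp(log_scale) + shift (assumed for illustration).

    Returns z and the per-dimension log|det J| terms, which for this
    diagonal Jacobian are just log_scale broadcast over the batch.
    """
    z = x * np.exp(log_scale) + shift
    per_dim_logdet = np.broadcast_to(log_scale, x.shape)
    return z, per_dim_logdet


def standard_normal_logpdf(z):
    """Per-dimension log density of a standard normal prior."""
    return -0.5 * (z ** 2 + np.log(2.0 * np.pi))


def log_likelihood(x, log_scale, shift):
    """Exact log p(x) by change of variables: log p_Z(f(x)) + log|det J|."""
    z, per_dim_logdet = affine_flow(x, log_scale, shift)
    return (standard_normal_logpdf(z) + per_dim_logdet).sum(axis=1)


def split_by_contribution(per_dim_score, n_factor_out, factor_out_largest=True):
    """Data-dependent split: rank dimensions by a score and factor out n of them.

    Whether high- or low-scoring dimensions should leave the flow early
    is an assumption here, exposed through factor_out_largest.
    """
    order = np.argsort(per_dim_score)
    if factor_out_largest:
        order = order[::-1]
    factored = np.sort(order[:n_factor_out])
    kept = np.sort(order[n_factor_out:])
    return factored, kept


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(8, 4))
    log_scale = np.array([0.1, 0.9, 0.5, 0.3])
    shift = np.zeros(4)

    print("log-likelihood per sample:", log_likelihood(x, log_scale, shift))

    # Score each dimension by its mean log-det contribution over the batch.
    _, per_dim_logdet = affine_flow(x, log_scale, shift)
    factored, kept = split_by_contribution(per_dim_logdet.mean(axis=0), 2)
    print("factored out:", factored, "kept:", kept)
```

A static multi-scale split would instead always factor out a fixed half of the dimensions regardless of the data; the ranking step above is what makes the split data dependent in this sketch.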
