Diffusion is All You Need for Learning on Surfaces

Paper and Code

Dec 01, 2020

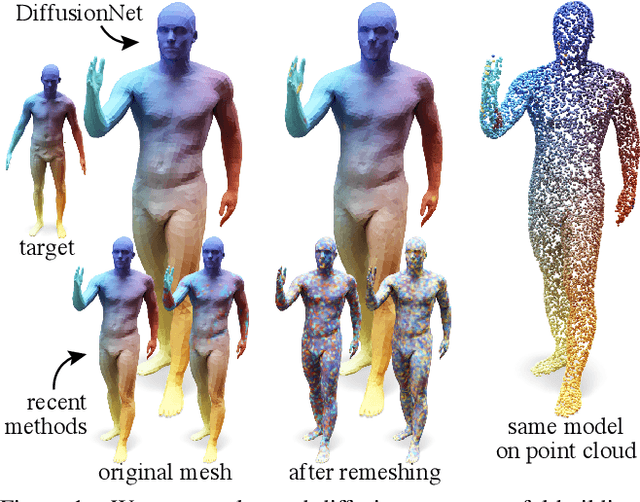

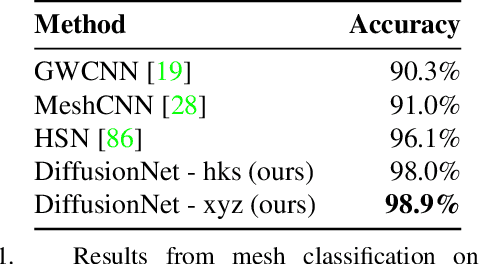

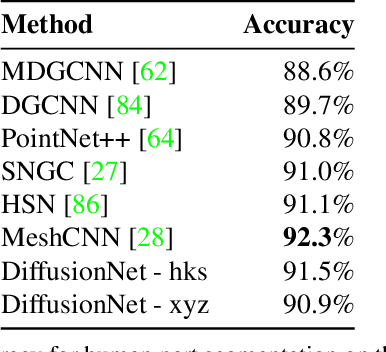

We introduce a new approach to deep learning on 3D surfaces such as meshes or point clouds. Our key insight is that a simple learned diffusion layer can spatially share data in a principled manner, replacing operations like convolution and pooling which are complicated and expensive on surfaces. The only other ingredients in our network are a spatial gradient operation, which uses dot-products of derivatives to encode tangent-invariant filters, and a multi-layer perceptron applied independently at each point. The resulting architecture, which we call DiffusionNet, is remarkably simple, efficient, and scalable. Continuously optimizing for spatial support avoids the need to pick neighborhood sizes or filter widths a priori, or worry about their impact on network size/training time. Furthermore, the principled, geometric nature of these networks makes them agnostic to the underlying representation and insensitive to discretization. In practice, this means significant robustness to mesh sampling, and even the ability to train on a mesh and evaluate on a point cloud. Our experiments demonstrate that these networks achieve state-of-the-art results for a variety of tasks on both meshes and point clouds, including surface classification, segmentation, and non-rigid correspondence.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge