Diffusion Asymptotics for Sequential Experiments


Feb 10, 2021

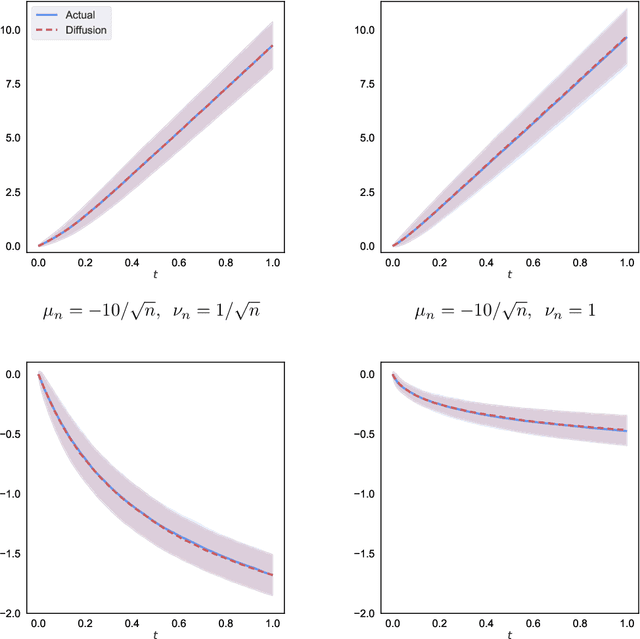

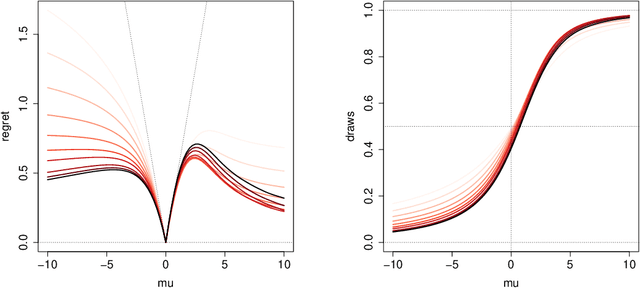

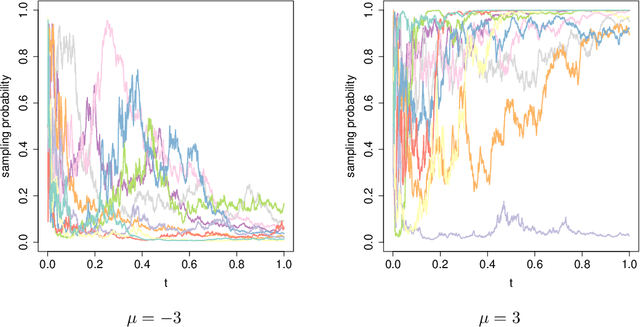

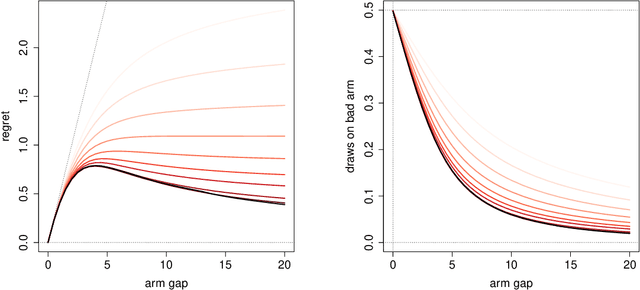

We propose a new diffusion-asymptotic analysis for sequentially randomized experiments. Rather than taking sample size $n$ to infinity while keeping the problem parameters fixed, we let the mean signal level scale to the order $1/\sqrt{n}$ so as to preserve the difficulty of the learning task as $n$ gets large. In this regime, we show that the behavior of a class of methods for sequential experimentation converges to a diffusion limit. This connection enables us to make sharp performance predictions and obtain new insights on the behavior of Thompson sampling. Our diffusion asymptotics also help resolve a discrepancy between the $\Theta(\log(n))$ regret predicted by the fixed-parameter, large-sample asymptotics on the one hand, and the $\Theta(\sqrt{n})$ regret from worst-case, finite-sample analysis on the other, suggesting that it is an appropriate asymptotic regime for understanding practical large-scale sequential experiments.
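To make the scaling concrete, here is a minimal simulation sketch, not the paper's own code. It assumes a Gaussian bandit with unit-variance rewards, an independent $N(0, 1)$ prior on each arm mean, and arm means set to $\delta_k/\sqrt{n}$; the function names `thompson_regret` and `scaled_regret` and all parameter choices are hypothetical. It runs Thompson sampling for $n$ steps and reports cumulative regret divided by $\sqrt{n}$, the normalization under which one would expect the result to remain of order one as $n$ grows if the diffusion scaling behaves as described.

```python
import math
import random


def thompson_regret(n, deltas, rng):
    """Run Gaussian Thompson sampling for n steps and return cumulative regret.

    Assumptions (illustrative, not from the paper): unit-variance Gaussian
    rewards and an independent N(0, 1) prior on each arm mean.
    """
    # Diffusion scaling: the mean signal shrinks like 1/sqrt(n).
    means = [d / math.sqrt(n) for d in deltas]
    best = max(means)
    counts = [0] * len(means)
    sums = [0.0] * len(means)
    regret = 0.0
    for _ in range(n):
        # Draw one sample from each arm's Gaussian posterior.
        draws = []
        for c, s in zip(counts, sums):
            post_var = 1.0 / (c + 1.0)      # prior precision 1 plus c observations
            post_mean = s * post_var
            draws.append(rng.gauss(post_mean, math.sqrt(post_var)))
        # Pull the arm with the largest posterior draw.
        arm = max(range(len(means)), key=draws.__getitem__)
        reward = rng.gauss(means[arm], 1.0)
        counts[arm] += 1
        sums[arm] += reward
        regret += best - means[arm]
    return regret


def scaled_regret(n, deltas, reps=20, seed=0):
    """Average regret over reps independent runs, divided by sqrt(n)."""
    rng = random.Random(seed)
    total = sum(thompson_regret(n, deltas, rng) for _ in range(reps))
    return total / reps / math.sqrt(n)


if __name__ == "__main__":
    for n in (100, 1000, 10000):
        print(n, scaled_regret(n, deltas=[0.0, 1.0]))
```

Because each step costs at most the gap divided by $\sqrt{n}$, the scaled regret in this sketch always lies between zero and the largest gap in `deltas`; comparing the printed values across $n$ gives a rough empirical check of the scaling, under the assumptions stated above.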
