Differentially Private Median Forests for Regression and Classification

Jun 15, 2020

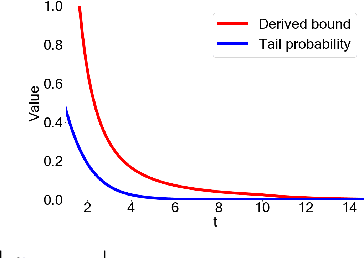

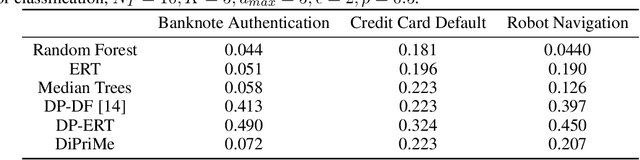

Random forests are a popular method for classification and regression due to their versatility. However, this flexibility can come at the cost of user privacy, since training random forests requires multiple data queries, often on small, identifiable subsets of the training data. Differentially private approaches based on extremely random trees reduce the number of queries, but can lead to low-occupancy leaf nodes, which require the addition of large amounts of noise. In this paper, we propose DiPriMe forests, a novel tree-based ensemble method for regression and classification problems that ensures differential privacy while maintaining high utility. We construct trees based on a privatized version of the median value of attributes, obtained via the exponential mechanism. The use of the noisy median encourages balanced leaf nodes, ensuring that the noise added to the parameter estimate at each leaf is not overly large. The resulting algorithm, which is appropriate for real or categorical covariates, exhibits high utility while ensuring differential privacy.
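
The privatized median split described above can be illustrated with a minimal sketch of the exponential mechanism applied to a single real-valued attribute. This is not the authors' implementation. The function name `private_median`, the public bounds `lower` and `upper`, the rank-based utility, and the assumed utility sensitivity of 1 are all assumptions chosen for illustration.

```python
import numpy as np


def private_median(values, lower, upper, epsilon, rng=None):
    """Sample a noisy median of `values` via the exponential mechanism.

    Illustrative sketch only: `lower`/`upper` are assumed public bounds on the
    attribute, and the utility of a candidate split is minus its rank distance
    from n/2 (assumed sensitivity 1).
    """
    if not lower < upper:
        raise ValueError("lower must be strictly less than upper")
    rng = np.random.default_rng() if rng is None else rng

    # Clip to the public range and sort.
    x = np.sort(np.clip(np.asarray(values, dtype=float), lower, upper))
    n = x.size

    # n + 1 intervals: [lower, x_1], [x_1, x_2], ..., [x_n, upper].
    edges = np.concatenate(([lower], x, [upper]))
    lengths = np.diff(edges)

    # Every point inside interval i has exactly i data points to its left.
    ranks = np.arange(n + 1)
    utility = -np.abs(ranks - n / 2.0)

    # Weight of an interval is length * exp(epsilon * utility / 2),
    # computed in log space; zero-length intervals get weight 0.
    with np.errstate(divide="ignore"):
        log_w = np.log(lengths) + epsilon * utility / 2.0
    log_w -= log_w.max()
    probs = np.exp(log_w)
    probs /= probs.sum()

    # Pick an interval, then a uniform point inside it.
    i = rng.choice(n + 1, p=probs)
    return rng.uniform(edges[i], edges[i + 1])
```

A call such as `private_median(ages, lower=0, upper=120, epsilon=0.5)` (with a hypothetical `ages` array) returns a split point that tends to fall near the true median. Splitting at such a point roughly halves the data at each node, which is why leaf nodes stay well populated and the noise added to each leaf estimate stays small relative to the leaf's count.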
