Depth Estimation in Nighttime using Stereo-Consistent Cyclic Translations

Sep 30, 2019

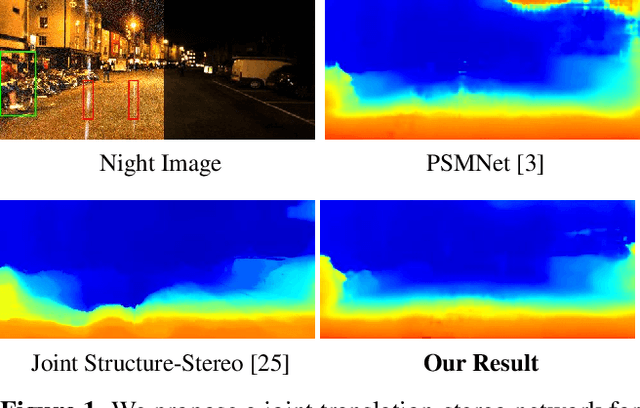

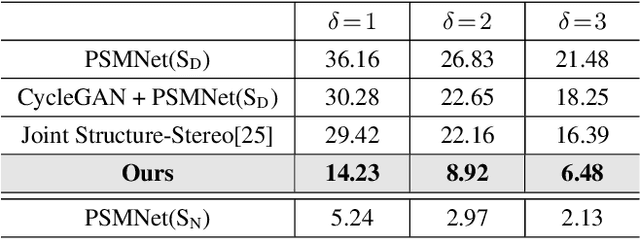

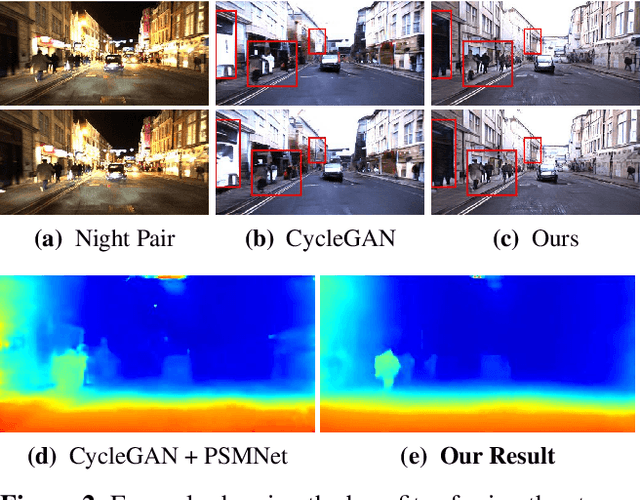

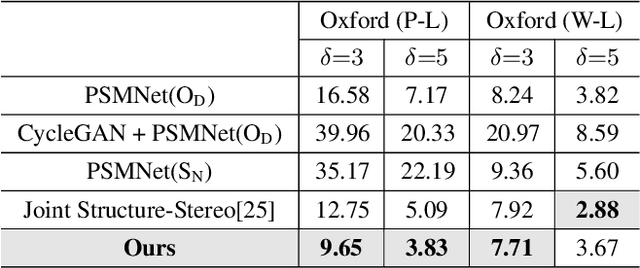

Most existing methods for depth from stereo are designed for daytime scenes, where lighting can be assumed to be sufficiently bright and more or less uniform. This assumption does not hold for nighttime scenes, so existing methods produce erroneous results when deployed at night. Nighttime imaging involves not only low light but also glow, glare, and non-uniform light distribution. One possible solution is to train a network on nighttime images in a fully supervised manner. However, obtaining disparity ground truth that is dense, unaffected by glare and glow, and covers sufficiently far depth ranges is extremely difficult. In this paper, we address nighttime depth from stereo with a joint translation and stereo network that is robust to nighttime conditions. Our method uses no direct supervision and does not require ground-truth disparities for the nighttime training images. First, we use a translation network that renders realistic nighttime stereo images from given daytime stereo images. Second, we train a stereo network on the rendered nighttime images using the disparity supervision available from the daytime images, while simultaneously training the translation network to gradually improve the rendered nighttime images. We introduce a stereo-consistency constraint into the translation network to ensure that the translated pairs are stereo-consistent. Our experiments show that the joint translation-stereo network outperforms state-of-the-art methods.
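
To make the idea of a stereo-consistency constraint concrete, the following is a minimal NumPy sketch of one common way such a check can be written: warp the translated right image into the left view using the known daytime disparity, then measure the photometric difference against the translated left image. The abstract does not state the actual loss used in the paper, so the function names `warp_right_to_left` and `stereo_consistency_loss`, the L1 penalty, and the linear interpolation are all assumptions made for illustration. The sketch also assumes rectified image pairs, where a left pixel at column `x` with disparity `d` matches the right pixel at column `x - d`.

```python
import numpy as np


def warp_right_to_left(right, disparity):
    """Resample the right image at column x - d(y, x) to synthesize the left view.

    right:     (H, W) or (H, W, C) float array
    disparity: (H, W) float array of left-view disparities in pixels
    Returns the warped image and a boolean mask of pixels whose source
    column falls inside the right image.
    """
    h, w = disparity.shape
    src_x = np.arange(w, dtype=np.float64)[None, :] - disparity
    valid = (src_x >= 0) & (src_x <= w - 1)
    src_x = np.clip(src_x, 0, w - 1)

    # Linear interpolation between the two nearest columns.
    x0 = np.floor(src_x).astype(int)
    x1 = np.minimum(x0 + 1, w - 1)
    frac = src_x - x0
    rows = np.arange(h)[:, None]
    if right.ndim == 3:
        frac = frac[..., None]
    warped = (1.0 - frac) * right[rows, x0] + frac * right[rows, x1]
    return warped, valid


def stereo_consistency_loss(left, right, disparity):
    """Mean absolute difference between the left image and the warped right
    image, over valid pixels only (hypothetical L1 form, not the paper's)."""
    warped, valid = warp_right_to_left(right, disparity)
    diff = np.abs(left - warped)
    if diff.ndim == 3:
        diff = diff.mean(axis=-1)
    return float(diff[valid].mean()) if valid.any() else 0.0


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    right_img = rng.random((4, 16, 3))
    disp = np.full((4, 16), 3.0)
    # A left image that agrees with the right image under a 3-pixel disparity.
    consistent_left = np.roll(right_img, 3, axis=1)
    # A left image unrelated to the right image.
    inconsistent_left = rng.random((4, 16, 3))
    print(stereo_consistency_loss(consistent_left, right_img, disp))
    print(stereo_consistency_loss(inconsistent_left, right_img, disp))
```

In a training setup of the kind the abstract describes, a term like this would be evaluated on the translated nighttime pair with the daytime disparity, so that the translation network is penalized when it renders glow or glare differently in the two views. A differentiable framework would be needed in practice; NumPy is used here only to keep the sketch self-contained.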
