Denoising Pre-Training and Data Augmentation Strategies for Enhanced RDF Verbalization with Transformers


Dec 01, 2020

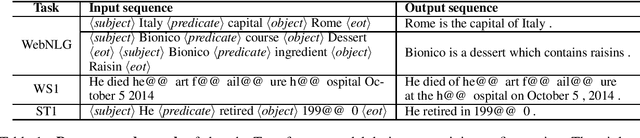

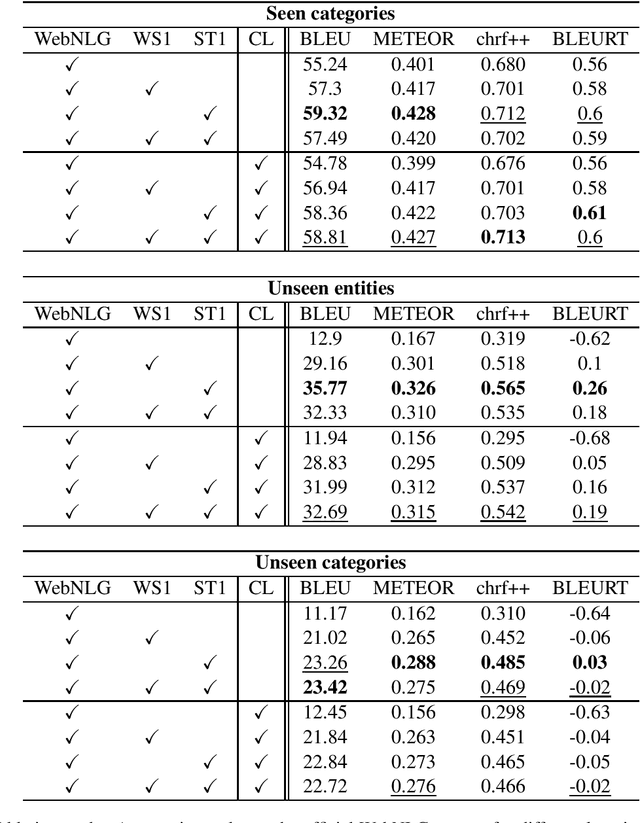

The task of verbalizing RDF triples has grown in popularity with the rising ubiquity of Knowledge Bases (KBs). The RDF triple formalism is a simple and efficient way to store facts at a large scale. However, its abstract representation makes it difficult for humans to interpret. To address this, the WebNLG challenge aims to promote automated RDF-to-text generation. We propose to leverage pre-training on augmented data with the Transformer model, using a data augmentation strategy. Our experimental results show minimum relative increases of 3.73%, 126.05% and 88.16% in BLEU score for seen categories, unseen entities and unseen categories respectively, compared with standard training.

* Accepted at WebNLG+: 3rd Workshop on Natural Language Generation from the Semantic Web
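
To make the abstract's terms concrete, the following minimal Python sketch shows one way an RDF triple could be flattened into a text sequence for a sequence-to-sequence model, and how a relative increase in BLEU is computed. The `Triple` class, the `<S>`/`<P>`/`<O>` marker tokens, the function names, the example triple and the scores are all assumptions made for illustration. They are not taken from the paper and do not reproduce its preprocessing or its results.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Triple:
    """A single RDF fact: (subject, predicate, object). Hypothetical helper type."""
    subject: str
    predicate: str
    obj: str


def linearize(triples: Iterable[Triple]) -> str:
    """Flatten triples into one string, marking each role with a tag.

    The <S>/<P>/<O> tags are an illustrative convention, not the paper's format.
    """
    parts = [f"<S> {t.subject} <P> {t.predicate} <O> {t.obj}" for t in triples]
    return " ".join(parts)


def relative_increase(baseline: float, improved: float) -> float:
    """Relative increase of `improved` over `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (improved - baseline) / baseline * 100.0


if __name__ == "__main__":
    # Illustrative triple; a model would be trained to turn the linearized
    # string into a fluent sentence describing the same fact.
    example = [Triple("Alan_Bean", "occupation", "Test_pilot")]
    print(linearize(example))

    # Hypothetical BLEU scores, used only to show the arithmetic.
    print(round(relative_increase(50.0, 51.865), 2))
```

Because the increase is relative to the baseline score, a very large percentage such as the one reported for unseen entities can arise when the baseline BLEU is low, so relative and absolute gains should be read together.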
