Demystifying Forgetting in Language Model Fine-Tuning with Statistical Analysis of Example Associations

Paper and Code

Jun 20, 2024

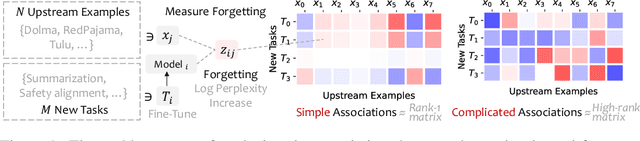

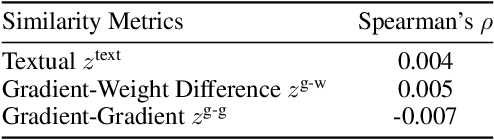

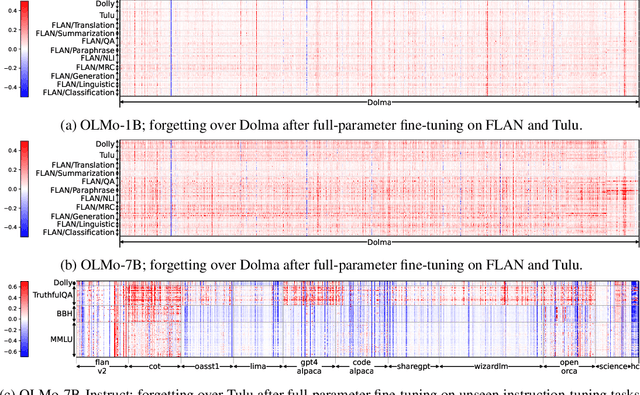

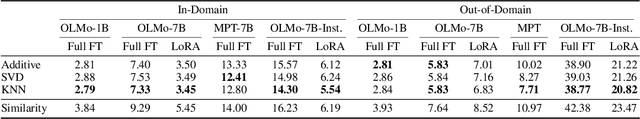

Language models (LMs) are known to suffer from forgetting of previously learned examples when fine-tuned, breaking stability of deployed LM systems. Despite efforts on mitigating forgetting, few have investigated whether, and how forgotten upstream examples are associated with newly learned tasks. Insights on such associations enable efficient and targeted mitigation of forgetting. In this paper, we empirically analyze forgetting that occurs in $N$ upstream examples while the model learns $M$ new tasks and visualize their associations with a $M \times N$ matrix. We empirically demonstrate that the degree of forgetting can often be approximated by simple multiplicative contributions of the upstream examples and newly learned tasks. We also reveal more complicated patterns where specific subsets of examples are forgotten with statistics and visualization. Following our analysis, we predict forgetting that happens on upstream examples when learning a new task with matrix completion over the empirical associations, outperforming prior approaches that rely on trainable LMs. Project website: https://inklab.usc.edu/lm-forgetting-prediction/

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge