Deletion-Robust Submodular Maximization at Scale

Paper and Code

Nov 21, 2017

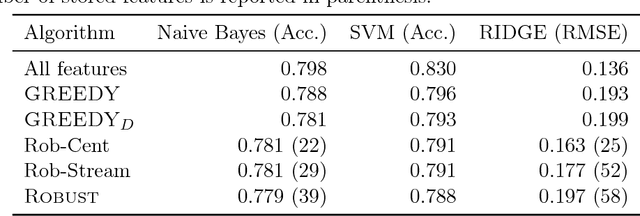

Can we efficiently extract useful information from a large user-generated dataset while protecting the privacy of the users and/or ensuring fairness in representation. We cast this problem as an instance of a deletion-robust submodular maximization where part of the data may be deleted due to privacy concerns or fairness criteria. We propose the first memory-efficient centralized, streaming, and distributed methods with constant-factor approximation guarantees against any number of adversarial deletions. We extensively evaluate the performance of our algorithms against prior state-of-the-art on real-world applications, including (i) Uber-pick up locations with location privacy constraints; (ii) feature selection with fairness constraints for income prediction and crime rate prediction; and (iii) robust to deletion summarization of census data, consisting of 2,458,285 feature vectors.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge