DeepWrinkles: Accurate and Realistic Clothing Modeling

Paper and Code

Aug 10, 2018

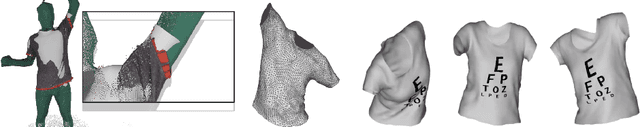

We present a novel method to generate accurate and realistic clothing deformation from real data capture. Previous methods for realistic cloth modeling mainly rely on intensive computation of physics-based simulation (with numerous heuristic parameters), while models reconstructed from visual observations typically suffer from lack of geometric details. Here, we propose an original framework consisting of two modules that work jointly to represent global shape deformation as well as surface details with high fidelity. Global shape deformations are recovered from a subspace model learned from 3D data of clothed people in motion, while high frequency details are added to normal maps created using a conditional Generative Adversarial Network whose architecture is designed to enforce realism and temporal consistency. This leads to unprecedented high-quality rendering of clothing deformation sequences, where fine wrinkles from (real) high resolution observations can be recovered. In addition, as the model is learned independently from body shape and pose, the framework is suitable for applications that require retargeting (e.g., body animation). Our experiments show original high quality results with a flexible model. We claim an entirely data-driven approach to realistic cloth wrinkle generation is possible.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge