Deep versus Wide: An Analysis of Student Architectures for Task-Agnostic Knowledge Distillation of Self-Supervised Speech Models

Paper and Code

Jul 14, 2022

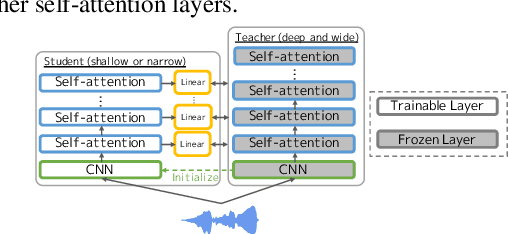

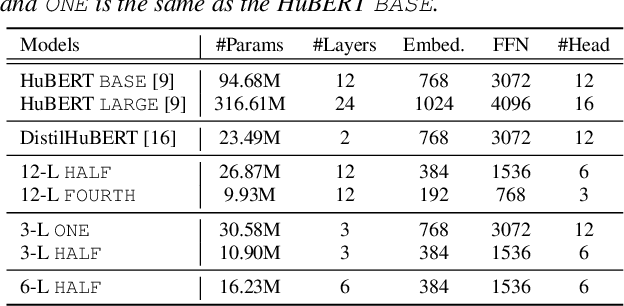

Self-supervised learning (SSL) is seen as a very promising approach with high performance for several speech downstream tasks. Since the parameters of SSL models are generally so large that training and inference require a lot of memory and computational cost, it is desirable to produce compact SSL models without a significant performance degradation by applying compression methods such as knowledge distillation (KD). Although the KD approach is able to shrink the depth and/or width of SSL model structures, there has been little research on how varying the depth and width impacts the internal representation of the small-footprint model. This paper provides an empirical study that addresses the question. We investigate the performance on SUPERB while varying the structure and KD methods so as to keep the number of parameters constant; this allows us to analyze the contribution of the representation introduced by varying the model architecture. Experiments demonstrate that a certain depth is essential for solving content-oriented tasks (e.g. automatic speech recognition) accurately, whereas a certain width is necessary for achieving high performance on several speaker-oriented tasks (e.g. speaker identification). Based on these observations, we identify, for SUPERB, a more compressed model with better performance than previous studies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge