Deep Reinforcement Learning for Distributed Dynamic Power Allocation in Wireless Networks

Paper and Code

Aug 01, 2018

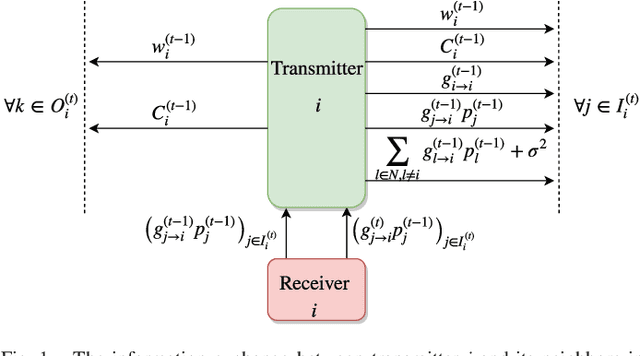

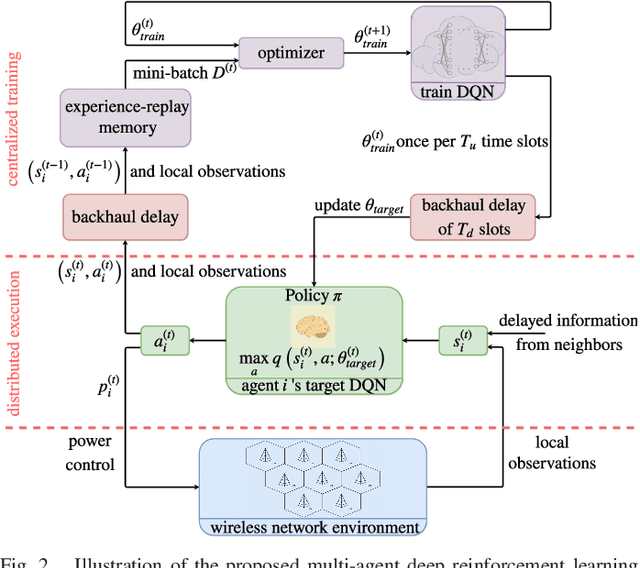

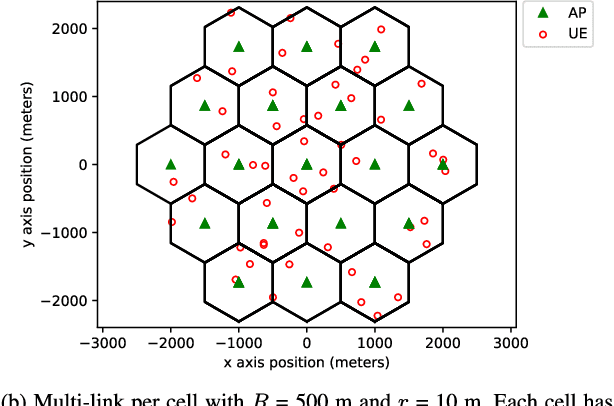

This work demonstrates the potential of deep reinforcement learning techniques for transmit power control in emerging and future wireless networks. Various techniques have been proposed in the literature to find near-optimal power allocations, often by solving a challenging optimization problem. Most of these algorithms do not scale to large networks in real-world scenarios because of their computational complexity and their need for instantaneous cross-cell channel state information (CSI). In this paper, a model-free distributed dynamic power allocation scheme is developed based on deep reinforcement learning. Each transmitter collects CSI and quality of service (QoS) information from several neighbors and adapts its own transmit power accordingly. The objective is to maximize a weighted sum-rate utility function, which can be particularized to achieve maximum sum-rate or proportionally fair scheduling (with weights that change over time). Both random variations and delays in the CSI are inherently addressed using deep Q-learning. For a typical network architecture, the proposed algorithm is shown to achieve near-optimal power allocation in real time based on delayed CSI measurements available to the agents. This work indicates that radio resource management based on deep reinforcement learning can be very fast and deliver highly competitive performance, especially in practical scenarios where the system model is inaccurate and CSI delay is non-negligible.
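To make the objective concrete, the following is a minimal sketch of a weighted sum-rate utility of the kind the abstract describes. It is not taken from the paper: the function name `weighted_sum_rate`, the gain-matrix layout, and the use of the Shannon rate `log2(1 + SINR)` per link are assumptions chosen for illustration.

```python
import math


def weighted_sum_rate(gains, powers, weights, noise_power):
    """Weighted sum of per-link rates, in bits/s/Hz (illustrative only).

    Assumed layout (not from the paper): gains[i][j] is the channel gain
    from transmitter j to receiver i, powers[j] is transmitter j's power,
    and weights[i] is the scheduling weight of link i.
    """
    n = len(powers)
    total = 0.0
    for i in range(n):
        signal = gains[i][i] * powers[i]
        # Interference at receiver i comes from every other transmitter.
        interference = sum(gains[i][j] * powers[j] for j in range(n) if j != i)
        sinr = signal / (interference + noise_power)
        total += weights[i] * math.log2(1.0 + sinr)
    return total


# Two links: link 0 sees interference from transmitter 1, link 1 sees none.
example_gains = [[2.0, 1.0],
                 [0.0, 1.0]]
example_utility = weighted_sum_rate(example_gains, [1.0, 1.0], [1.0, 1.0], 1.0)
```

With all weights equal to one, this reduces to the plain sum-rate. Under the same assumptions, a proportionally fair variant would update `weights` over time, for example in inverse proportion to each link's long-term average rate.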
