Deep Latent-Variable Kernel Learning


May 18, 2020

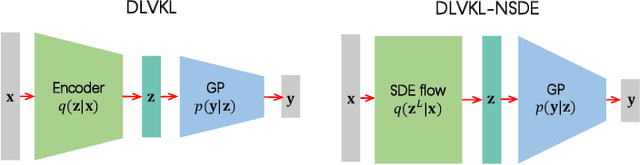

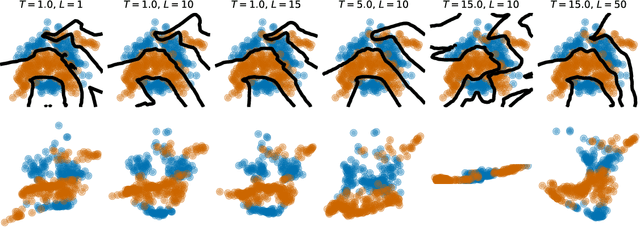

Deep kernel learning (DKL) leverages the connection between Gaussian processes (GPs) and neural networks (NNs) to build an end-to-end hybrid model. It combines the capability of NNs to learn rich representations from massive data with the non-parametric property of GPs, which provides automatic calibration. However, because the latent representation is unconstrained, the deterministic encoder may weaken the calibration of the subsequent GP part, especially on small datasets. We therefore present a complete deep latent-variable kernel learning (DLVKL) model in which the latent variables perform stochastic encoding to obtain a regularized representation. Theoretical analysis indicates, however, that the DLVKL with an i.i.d. prior on the latent variables suffers from posterior collapse and degenerates to a constant predictor. Hence, we further enhance the DLVKL in two respects: (i) a more expressive variational posterior, constructed through a neural stochastic differential equation (NSDE), to reduce the divergence gap, and (ii) a hybrid prior that draws on both the SDE prior and the posterior to arrive at a flexible trade-off. Extensive experiments indicate that the DLVKL-NSDE performs similarly to the well-calibrated GP on small datasets and outperforms existing deep GPs on large datasets.
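To make the contrast between deterministic and stochastic encoding concrete, the following is a minimal sketch and not the authors' implementation. It assumes a tiny untrained one-hidden-layer encoder, an RBF base kernel, and a standard normal prior as one possible instance of an i.i.d. prior; all function names and sizes (`make_encoder`, `encode`, `sample_latent`, and so on) are hypothetical and chosen only for illustration.

```python
import math
import random


def make_encoder(in_dim, hidden_dim, latent_dim, rng):
    """Random weights for a tiny, untrained encoder (illustrative only)."""
    def mat(rows, cols):
        return [[rng.gauss(0.0, 1.0) / math.sqrt(cols) for _ in range(cols)]
                for _ in range(rows)]
    return {
        "W1": mat(hidden_dim, in_dim),
        "W_mu": mat(latent_dim, hidden_dim),
        "W_logvar": mat(latent_dim, hidden_dim),
    }


def matvec(W, v):
    """Plain matrix-vector product on nested lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def encode(params, x):
    """Return the mean and log-variance of a Gaussian q(z | x)."""
    h = [math.tanh(a) for a in matvec(params["W1"], x)]
    return matvec(params["W_mu"], h), matvec(params["W_logvar"], h)


def sample_latent(mu, logvar, rng):
    """Reparameterized sample: z = mu + sigma * eps with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, logvar)]


def kl_to_standard_normal(mu, logvar):
    """Closed-form KL(N(mu, diag(exp(logvar))) || N(0, I)).

    Assumption: a standard normal stands in for the i.i.d. prior.
    """
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv
                     for m, lv in zip(mu, logvar))


def rbf(z1, z2, lengthscale=1.0, variance=1.0):
    """RBF base kernel evaluated on latent codes."""
    sq = sum((a - b) ** 2 for a, b in zip(z1, z2))
    return variance * math.exp(-0.5 * sq / lengthscale ** 2)


def kernel_matrix(Z):
    """Gram matrix of the base kernel over a list of latent codes."""
    return [[rbf(zi, zj) for zj in Z] for zi in Z]


rng = random.Random(0)
params = make_encoder(in_dim=3, hidden_dim=8, latent_dim=2, rng=rng)
X = [[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(4)]

stats = [encode(params, x) for x in X]

# Deterministic encoding (DKL-style): the kernel sees only the encoder mean.
Z_det = [mu for mu, _ in stats]
K_det = kernel_matrix(Z_det)

# Stochastic encoding (latent-variable style): the kernel sees a sample of z,
# and a KL term regularizes q(z | x) toward the assumed prior.
Z_sto = [sample_latent(mu, lv, rng) for mu, lv in stats]
K_sto = kernel_matrix(Z_sto)
kl_total = sum(kl_to_standard_normal(mu, lv) for mu, lv in stats)
```

In a trained model the kernel matrix would feed a GP likelihood and the KL term would enter the variational objective. The sketch stops at the kernel matrix so that the only difference on display is whether the GP receives a fixed code or a sampled, regularized one.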
