Deep Exposure Fusion with Deghosting via Homography Estimation and Attention Learning

Paper and Code

Apr 20, 2020

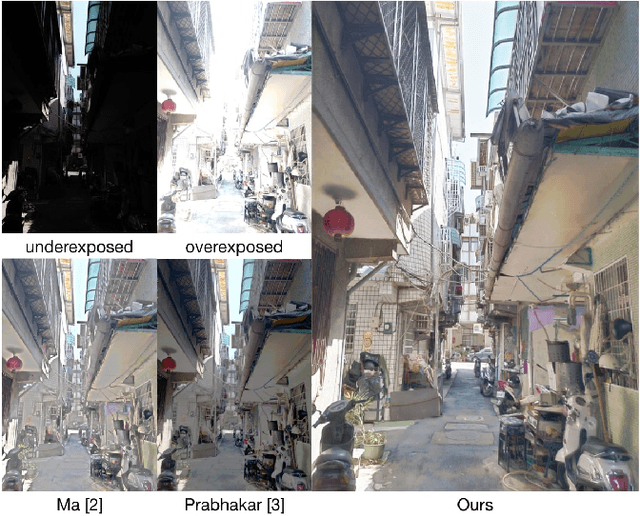

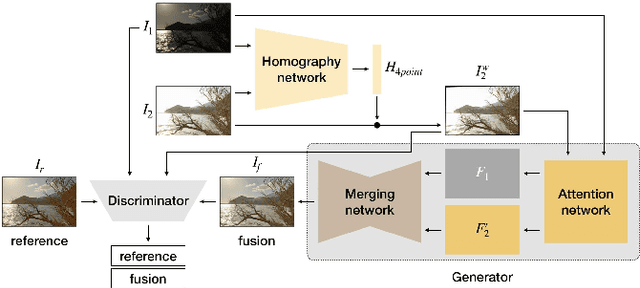

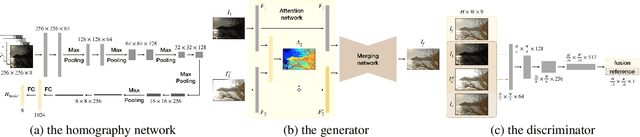

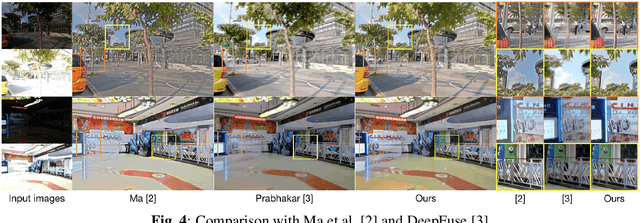

Modern cameras have limited dynamic ranges and often produce images with saturated or dark regions using a single exposure. Although the problem could be addressed by taking multiple images with different exposures, exposure fusion methods need to deal with ghosting artifacts and detail loss caused by camera motion or moving objects. This paper proposes a deep network for exposure fusion. For reducing the potential ghosting problem, our network only takes two images, an underexposed image and an overexposed one. Our network integrates together homography estimation for compensating camera motion, attention mechanism for correcting remaining misalignment and moving pixels, and adversarial learning for alleviating other remaining artifacts. Experiments on real-world photos taken using handheld mobile phones show that the proposed method can generate high-quality images with faithful detail and vivid color rendition in both dark and bright areas.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge