Deep End-to-End Survival Analysis with Temporal Consistency

Paper and Code

Oct 09, 2024

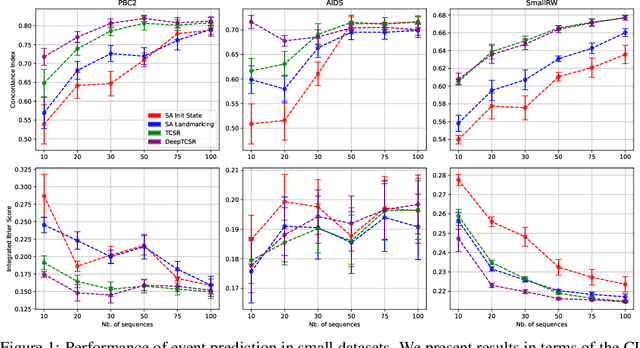

In this study, we present a novel Survival Analysis algorithm designed to efficiently handle large-scale longitudinal data. Our approach draws inspiration from Reinforcement Learning principles, particularly the Deep Q-Network paradigm, extending Temporal Learning concepts to Survival Regression. A central idea in our method is temporal consistency, the hypothesis that past and future outcomes in the data evolve smoothly over time. Our framework brings temporal consistency to large datasets by providing a stable training signal that captures long-term temporal relationships and ensures reliable updates. Additionally, the method supports arbitrarily complex architectures, enabling the modeling of intricate temporal dependencies, and allows for end-to-end training. Through numerous experiments, we provide empirical evidence of our framework's ability to exploit temporal consistency across datasets of varying sizes. Moreover, our algorithm outperforms benchmarks on datasets with long sequences, which indicates that it captures long-term patterns. Finally, ablation studies show how our method enhances training stability.
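To make the idea of a temporally consistent training signal concrete, here is a minimal sketch in Python. It is not the paper's implementation. It assumes a discrete-time setting with `K` horizon bins, where the last bin means "event at step K or later", and it assumes the stable signal comes from a slowly updated copy of the model, in the spirit of the target network used in Deep Q-Networks. The names `bootstrap_target`, `soft_update`, and `tau` are hypothetical, and the soft (Polyak-style) update is a common variant chosen for illustration rather than something the abstract specifies.

```python
import numpy as np


def bootstrap_target(next_pmf, event_next):
    """Build a training target over K horizon bins for the current step.

    next_pmf:   prediction of the (assumed) target network at the NEXT time
                step, shape (K,), summing to 1. Entry k is the probability
                that the event falls in bin k counted from the next step;
                the last bin means "K or later".
    event_next: True if the event is observed in the very next step.
    """
    K = next_pmf.shape[0]
    target = np.zeros(K)
    if event_next:
        # The event is observed immediately: all mass goes to the first bin.
        target[0] = 1.0
        return target
    # The subject survived one step, so "bin k from now" corresponds to
    # "bin k-1 from the next step": shift the next-step prediction by one.
    target[1:] = next_pmf[:-1]
    # Mass that would shift past the horizon stays in the final bin.
    target[-1] += next_pmf[-1]
    return target


def soft_update(target_params, online_params, tau=0.01):
    """Move target parameters a small step toward the online parameters."""
    return {
        name: (1.0 - tau) * target_params[name] + tau * online_params[name]
        for name in target_params
    }


# Example: the next-step prediction is shifted one bin into the future.
next_pmf = np.array([0.2, 0.3, 0.5])
shifted = bootstrap_target(next_pmf, event_next=False)  # [0.0, 0.2, 0.8]
observed = bootstrap_target(next_pmf, event_next=True)  # [1.0, 0.0, 0.0]
```

Under these assumptions, the online model would be trained toward `bootstrap_target(...)` with a cross-entropy loss, while `soft_update` keeps the target copy changing slowly so that the targets do not shift abruptly between updates.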