Deciphering Implicit Hate: Evaluating Automated Detection Algorithms for Multimodal Hate

Paper and Code

Jun 10, 2021

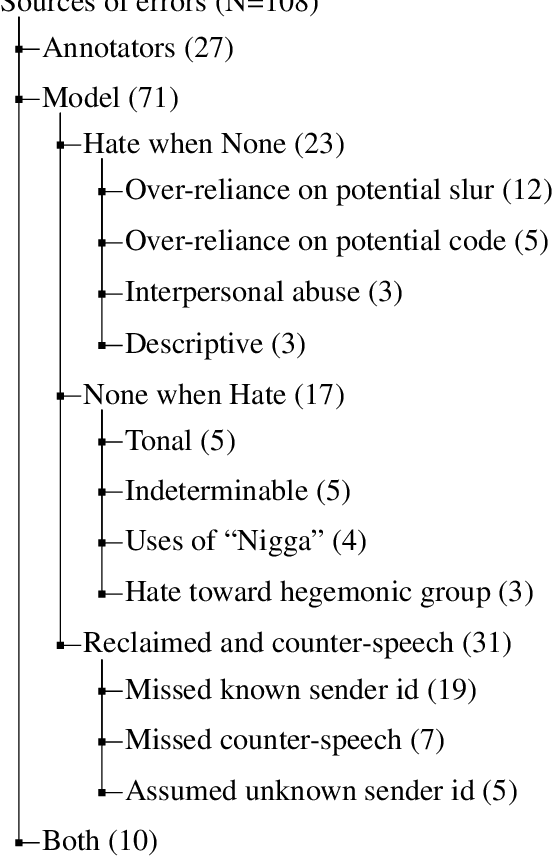

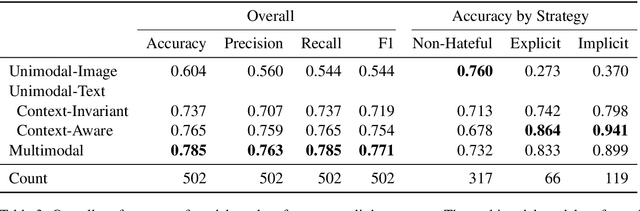

Accurate detection and classification of online hate is a difficult task. Implicit hate is particularly challenging as such content tends to have unusual syntax, polysemic words, and fewer markers of prejudice (e.g., slurs). This problem is heightened with multimodal content, such as memes (combinations of text and images), as they are often harder to decipher than unimodal content (e.g., text alone). This paper evaluates the role of semantic and multimodal context for detecting implicit and explicit hate. We show that both text- and visual- enrichment improves model performance, with the multimodal model (0.771) outperforming other models' F1 scores (0.544, 0.737, and 0.754). While the unimodal-text context-aware (transformer) model was the most accurate on the subtask of implicit hate detection, the multimodal model outperformed it overall because of a lower propensity towards false positives. We find that all models perform better on content with full annotator agreement and that multimodal models are best at classifying the content where annotators disagree. To conduct these investigations, we undertook high-quality annotation of a sample of 5,000 multimodal entries. Tweets were annotated for primary category, modality, and strategy. We make this corpus, along with the codebook, code, and final model, freely available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge