Deceptive Kernel Function on Observations of Discrete POMDP


Aug 12, 2020

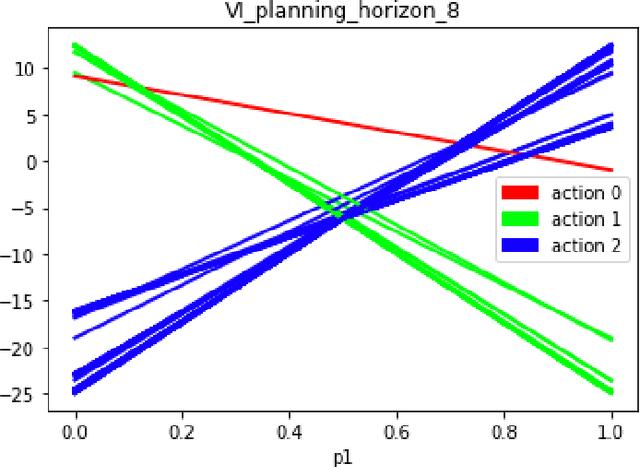

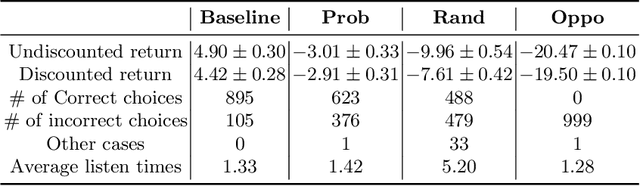

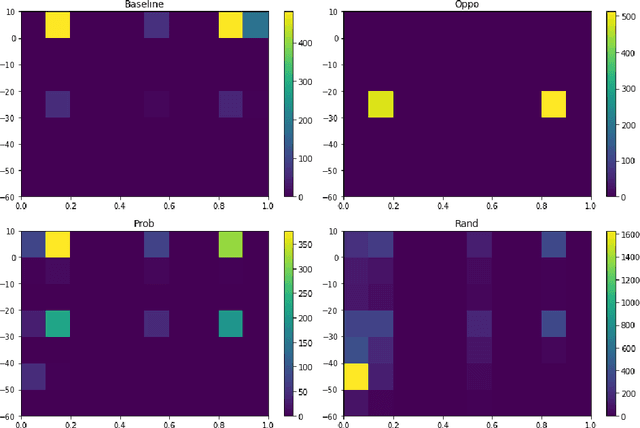

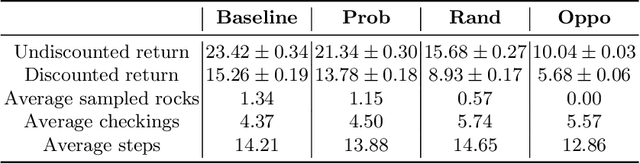

This paper studies deception applied to an agent in a partially observable Markov decision process (POMDP). We introduce a deceptive kernel function (the kernel) applied to the agent's observations in a discrete POMDP. Considering three characteristic algorithms the agent may use (value iteration, value function approximation, and POMCP), we analyze how its belief is misled by the falsified observations that the kernel outputs, and we anticipate the probable threat to the agent's reward and potentially to other aspects of its performance. We validate our expectations and explore further detrimental effects of the deception through experiments on two POMDP problems. The results show that the kernel applied to the agent's observations can affect its belief and substantially lower its resulting rewards; meanwhile, certain implementations of the kernel can induce other abnormal behaviors in the agent.
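To make the mechanism concrete, the following is a minimal sketch of how a falsified observation could mislead a standard discrete Bayes belief update. It is not the paper's implementation. The kernel is assumed here to be a row-stochastic matrix `kernel[o, o']` giving the probability of reporting `o'` when the true observation is `o`; the two-state model, its probabilities, and the function names `belief_update` and `apply_kernel` are all hypothetical and chosen only for illustration.

```python
import numpy as np


def belief_update(belief, action, observation, T, O):
    """Discrete POMDP Bayes filter.

    T[a, s, s2] = P(s2 | s, a); O[a, s2, o] = P(o | s2, a).
    """
    predicted = belief @ T[action]  # P(s2 | belief, action)
    unnormalized = O[action][:, observation] * predicted
    total = unnormalized.sum()
    if total == 0.0:
        # Observation is impossible under the agent's model; keep the prediction.
        return predicted
    return unnormalized / total


def apply_kernel(kernel, true_observation, rng):
    """Sample a falsified observation (assumed form: kernel[o, o2] = P(report o2 | true o))."""
    return int(rng.choice(kernel.shape[1], p=kernel[true_observation]))


# Hypothetical two-state, one-action model (illustrative numbers only).
T = np.array([[[1.0, 0.0],
               [0.0, 1.0]]])      # the single action leaves the state unchanged
O = np.array([[[0.85, 0.15],
               [0.15, 0.85]]])    # the observation matches the state 85% of the time

# Assumed kernel: always report the opposite observation.
kernel = np.array([[0.0, 1.0],
                   [1.0, 0.0]])

rng = np.random.default_rng(0)
prior = np.array([0.5, 0.5])
true_obs = 0                                      # evidence for state 0
fake_obs = apply_kernel(kernel, true_obs, rng)    # what the agent actually receives

honest = belief_update(prior, 0, true_obs, T, O)
deceived = belief_update(prior, 0, fake_obs, T, O)
print("honest belief:  ", honest)
print("deceived belief:", deceived)
```

In this sketch the agent updates with its own unmodified observation model, so the swapped observation pushes its belief toward the wrong state. Any planner that acts on that belief, whether value iteration, value function approximation, or POMCP, would then choose actions for a state the environment is unlikely to be in.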
