Decentralized Federated Learning: Fundamentals, State-of-the-art, Frameworks, Trends, and Challenges

Nov 15, 2022

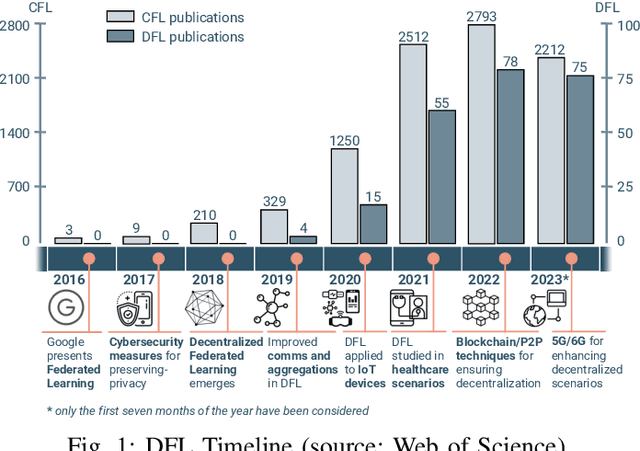

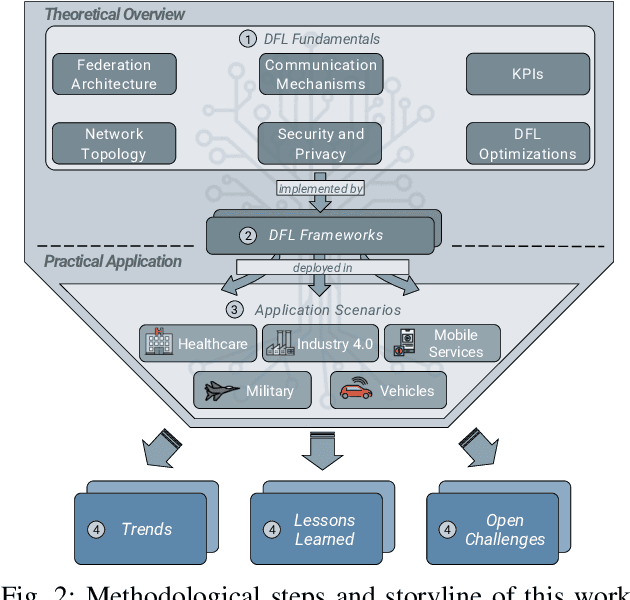

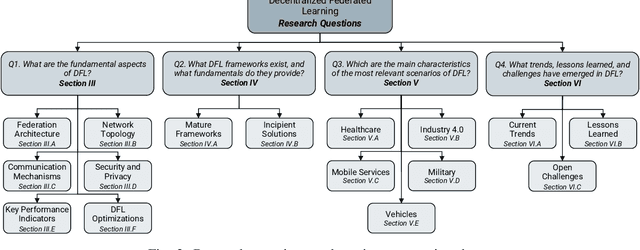

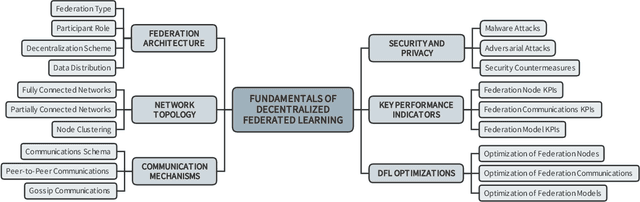

In the last decade, Federated Learning (FL) has gained relevance in training collaborative models without sharing sensitive data. Since its birth, Centralized FL (CFL) has been the most common approach in the literature, where a single entity creates global models. However, a centralized approach has several disadvantages: a bottleneck at the server node, a single point of failure, and the need to trust the central entity. Decentralized Federated Learning (DFL) arose to address these issues by embracing the principles of data sharing minimization and decentralized model aggregation without relying on centralized architectures. However, despite the work done in DFL, the literature has not (i) studied the main fundamentals differentiating DFL and CFL; (ii) reviewed application scenarios and solutions using DFL; and (iii) analyzed DFL frameworks to create and evaluate new solutions. To this end, this article identifies and analyzes the main fundamentals of DFL in terms of federation architectures, topologies, communication mechanisms, security approaches, and key performance indicators. Additionally, the paper explores existing mechanisms to optimize critical DFL fundamentals. Then, this work analyzes and compares the most used DFL application scenarios and solutions according to the fundamentals previously defined. After that, the most relevant features of current DFL frameworks are reviewed and compared. Finally, the evolution of existing DFL solutions is analyzed to provide a list of trends, lessons learned, and open challenges.
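To make the contrast between CFL and DFL aggregation concrete, the following is a minimal sketch and not the paper's method. It assumes each model is a flat list of floats, a fixed ring topology of four nodes, and plain unweighted averaging with neighbors; the names `cfl_round`, `dfl_round`, and `ring` are hypothetical and chosen only for illustration.

```python
from typing import Dict, List

Model = List[float]  # assumption: a model is a flat list of parameters


def average(models: List[Model]) -> Model:
    """Element-wise unweighted mean of several parameter vectors."""
    n = len(models)
    return [sum(values) / n for values in zip(*models)]


def cfl_round(local_models: Dict[int, Model]) -> Dict[int, Model]:
    """Centralized FL: one server averages every model and sends it back."""
    global_model = average(list(local_models.values()))
    return {node: list(global_model) for node in local_models}


def dfl_round(local_models: Dict[int, Model],
              topology: Dict[int, List[int]]) -> Dict[int, Model]:
    """Decentralized FL: each node averages its own model with its neighbors'."""
    return {
        node: average([local_models[node]] +
                      [local_models[peer] for peer in topology[node]])
        for node in local_models
    }


# Hypothetical ring of four nodes; each node only talks to two neighbors.
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
models = {0: [0.0], 1: [4.0], 2: [8.0], 3: [12.0]}

central = cfl_round(models)

decentral = models
for _ in range(50):  # repeated neighbor averaging, no server involved
    decentral = dfl_round(decentral, ring)
```

In this toy setting, the centralized round reaches the global mean in one step but depends on a single aggregator, while the decentralized rounds approach the same mean gradually through neighbor exchanges only. Real DFL systems also involve local training between rounds, weighting, and topology-dependent convergence behavior, none of which this sketch models.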
