Decentralized and Model-Free Federated Learning: Consensus-Based Distillation in Function Space


Apr 02, 2021

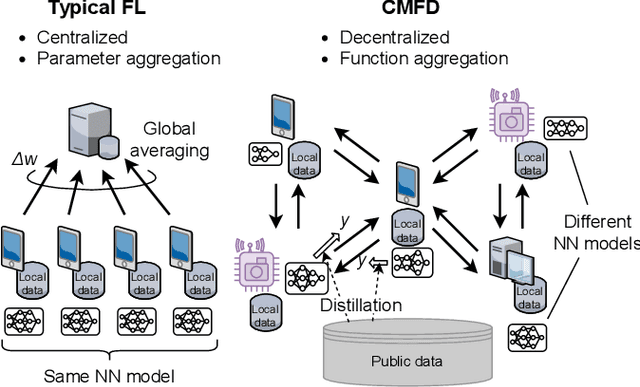

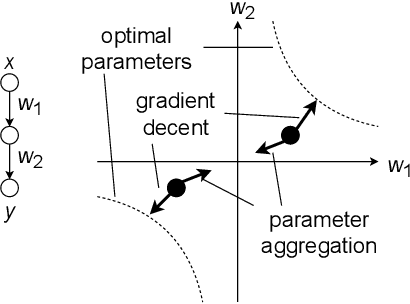

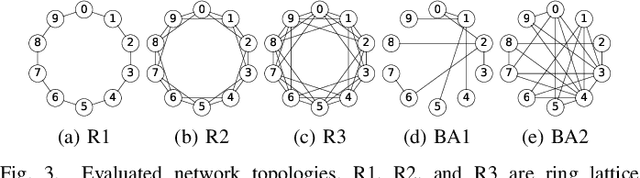

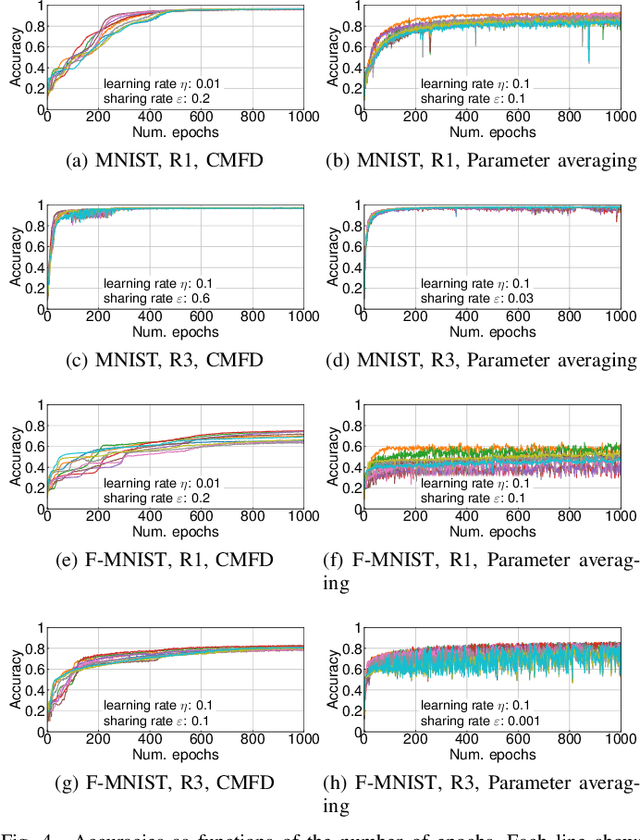

This paper proposes a decentralized federated learning (FL) scheme for Internet of Everything (IoE) devices connected via multi-hop networks. FL has gained attention as an enabler of privacy-preserving algorithms, but decentralized parameter-averaging schemes are not guaranteed to converge to the optimal point because of non-convexity. A distributed algorithm that does converge to the optimal solution is therefore needed. The key idea of the proposed algorithm is to aggregate the local prediction functions in a function space rather than in a parameter space. Since machine learning tasks can be regarded as convex functional optimization problems, a consensus-based optimization algorithm achieves the global optimum if it is tailored to work in a function space. This paper first analyzes the convergence of the proposed algorithm in a function space; this function-space formulation is referred to as a meta-algorithm. It is shown that spectral graph theory can be applied to the function space in the same manner as to numerical vectors. Then, consensus-based multi-hop federated distillation (CMFD) is developed for neural networks (NNs) as an implementation of the meta-algorithm. CMFD leverages knowledge distillation to realize function aggregation among adjacent devices without parameter averaging. One advantage of CMFD is that it works even when the NN models differ among the distributed learners. This paper shows that CMFD achieves higher accuracy than parameter aggregation under weakly connected networks, and that it is also more stable than parameter aggregation methods.
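The consensus step behind function-space aggregation can be illustrated with a small sketch. It is not the paper's CMFD implementation: it assumes each device's prediction function is represented by its outputs on a shared set of input points, and it uses a mixing matrix `W = I - step * L` built from the graph Laplacian `L`. The names `mixing_matrix` and `consensus_round`, the 4-device line graph, and the random outputs are all hypothetical. Local training and the distillation of the aggregated outputs back into each device's model are omitted.

```python
import numpy as np

def mixing_matrix(adjacency, step=None):
    """Build W = I - step * L from an undirected adjacency matrix (assumed form)."""
    adjacency = np.asarray(adjacency, dtype=float)
    degree = adjacency.sum(axis=1)
    laplacian = np.diag(degree) - adjacency
    if step is None:
        # A conservative step size that keeps W's eigenvalues inside (-1, 1].
        step = 1.0 / (degree.max() + 1.0)
    return np.eye(len(adjacency)) - step * laplacian

def consensus_round(outputs, W):
    """Mix prediction outputs among neighbors.

    outputs has shape (n_devices, n_points, n_classes): each device's
    predictions on the shared points stand in for its prediction function.
    """
    return np.einsum("ij,jpc->ipc", W, outputs)

# Hypothetical multi-hop topology: four devices on a line, 0 - 1 - 2 - 3.
adjacency = np.array([
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
])
W = mixing_matrix(adjacency)

# Placeholder local predictions (random values, not real model outputs).
rng = np.random.default_rng(0)
outputs = rng.random((4, 5, 3))
target = outputs.mean(axis=0)  # network-wide average function

current = outputs.copy()
for _ in range(200):
    current = consensus_round(current, W)

# Largest remaining disagreement between any device and the average.
max_gap = np.abs(current - target).max()
```

Because `W` is symmetric with rows summing to one, each round preserves the network-wide average while shrinking the disagreement between devices, even though devices 0 and 3 never communicate directly. No parameters are exchanged, so the devices could in principle use different model architectures.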
