Deadwooding: Robust Global Pruning for Deep Neural Networks

Paper and Code

Feb 17, 2022

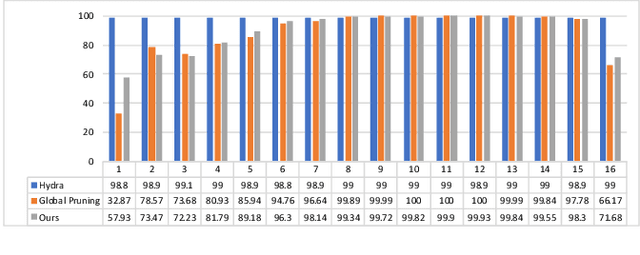

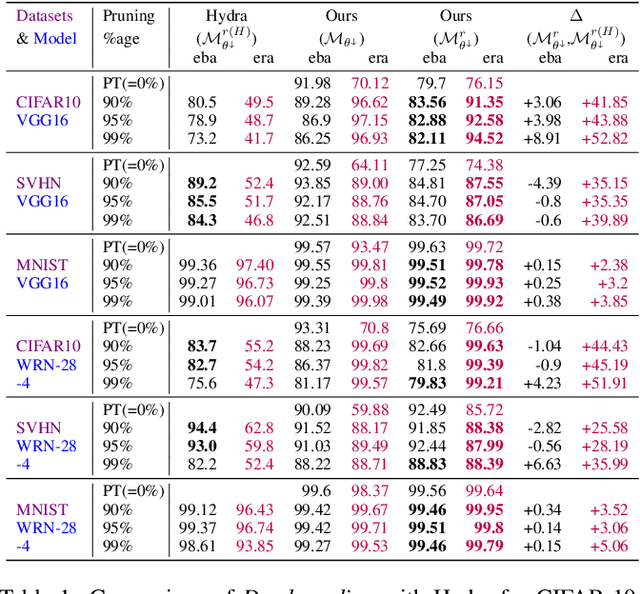

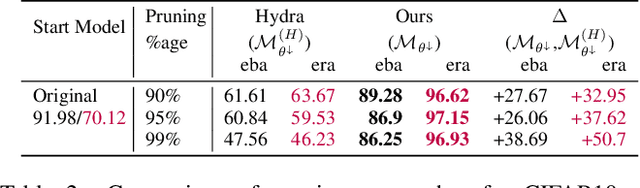

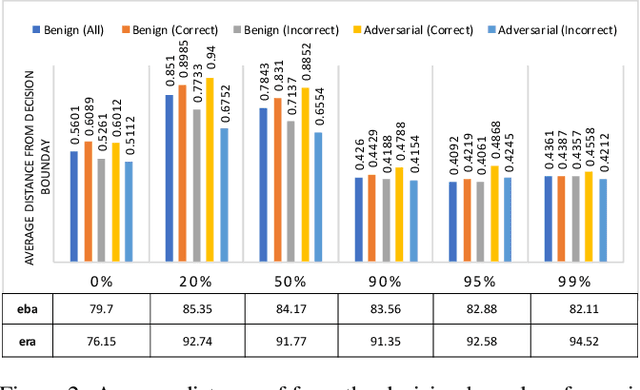

Deep Neural Networks owe much of their success to their ability to approximate highly complex functions. This ability, however, often comes at the cost of a large model size, which hinders deployment in resource-constrained environments. Pruning techniques can mitigate this issue by introducing sparsity into the models, but at the cost of accuracy and adversarial robustness. This paper addresses these issues and introduces Deadwooding, a novel pruning technique that uses a Lagrangian dual method to encourage model sparsity while retaining accuracy and ensuring robustness. The resulting model is shown to significantly outperform state-of-the-art methods in measures of robustness and accuracy.

* 12 pages, 5 figures
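
The abstract names two ingredients, a Lagrangian dual method and global pruning, without giving the formulation. The sketch below is not the paper's algorithm. It is a generic toy illustration under stated assumptions: a linear least-squares model stands in for the network, sparsity is encouraged through an assumed constraint `||w||_1 <= l1_budget`, and pruning uses a single magnitude threshold shared across all weight arrays. The function names and hyperparameters (`lagrangian_dual_fit`, `global_magnitude_mask`, `l1_budget`, `dual_lr`) are hypothetical and chosen only for illustration.

```python
import numpy as np


def lagrangian_dual_fit(X, y, l1_budget, steps=2000, lr=0.01, dual_lr=0.05):
    """Toy primal-dual loop (assumption: NOT the paper's formulation).

    Minimizes mean squared error subject to ||w||_1 <= l1_budget by
    alternating a primal subgradient step on w with a dual ascent step
    on the multiplier lam.
    """
    n, d = X.shape
    w = np.zeros(d)
    lam = 0.0  # Lagrange multiplier, kept non-negative
    for _ in range(steps):
        residual = X @ w - y
        grad_loss = 2.0 * X.T @ residual / n
        # Constraint violation g(w) = ||w||_1 - budget (positive if violated).
        violation = np.abs(w).sum() - l1_budget
        # Primal step on the Lagrangian: loss(w) + lam * g(w).
        w -= lr * (grad_loss + lam * np.sign(w))
        # Dual step: raise lam while the constraint is violated, clip at 0.
        lam = max(0.0, lam + dual_lr * violation)
    return w, lam


def global_magnitude_mask(weights, sparsity):
    """Boolean keep-masks using one magnitude threshold across all arrays.

    `sparsity` is the fraction of weights to remove. Ties at the threshold
    are also removed, so slightly more than that fraction may be pruned.
    """
    flat = np.concatenate([np.abs(w).ravel() for w in weights])
    k = int(sparsity * flat.size)  # number of weights to remove
    if k == 0:
        return [np.ones(w.shape, dtype=bool) for w in weights]
    threshold = np.partition(flat, k - 1)[k - 1]  # k-th smallest magnitude
    return [np.abs(w) > threshold for w in weights]
```

In this sketch the multiplier `lam` is adjusted automatically rather than hand-tuned as a fixed penalty weight, which is the usual motivation for a dual update. The global mask lets layers with many small weights lose more of them than layers whose weights are large, unlike a fixed per-layer pruning ratio.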
